Capacity prewarming stops ALB errors instantly

Pre-warming hundreds of Application Load Balancers manually is a cumbersome, error-prone nightmare that automated LCU reservation solves instantly. By using the ELBV2 API, organizations can proactively reserve Load Balancer Capacity Units to guarantee performance when traffic doubles in under five minutes.

The global cloud load balancers market is exploding toward $3.98 billion by 2027, yet many teams still risk outage during critical moments like FSI Capital Markets trading windows or massive ticket sales. AWS documentation confirms that while ALBs scale organically, they cannot instantly provision the massive capacity required for sudden spikes without pre-warming. Manual intervention across a multi-account AWS Organizations environment is simply too slow and risky for modern enterprise demands.

Readers will learn how to architect cross-account automation workflows that eliminate human error during high-stakes events. This approach ensures your infrastructure handles advertising campaigns and product launches with mathematical precision rather than hopeful guessing.

The Critical Role of Provisioned Capacity in ALB Performance

Defining LCU Dimensions and Provisioned Capacity Thresholds

AWS Documentation data shows one Load Balancer Capacity Unit covers 25 new connections per second, 3,000 active connections, 1 GB of data, or 1,000 rule evaluations. This metric defines the processing ceiling where billing aligns with the highest consumed dimension rather than aggregate throughput. CloudZero analysis confirms charges apply to the single peak value across these four vectors each hour. Provisioned capacity establishes a guaranteed minimum floor instead of relying on reactive scaling mechanisms. According to AWS Documentation, operators should reserve capacity when traffic spikes more than double normal volume in under five minutes. The cost implication is distinct: reserved LCUs incur a fixed hourly rate regardless of actual utilization during the window.

| Metric Dimension | Single LCU Limit | Billing Trigger |
|---|---|---|
| New Connections | 25 per second | Peak second |
| Active Connections | 3,000 per minute | Peak minute |
| Processed Data | 1 GB per hour | Total volume |
| Rule Evaluations | 1,000 per second | Peak second |

The limitation involves financial waste if reserved capacity exceeds actual demand profiles. Over-provisioning locks capital into unused compute cycles that auto-scaling would otherwise avoid. This targeted approach prevents blanket over-spending while securing necessary throughput headroom.
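The sizing rule above (usage is measured on whichever dimension consumes the most LCUs) can be sketched in a few lines of Python. This is a minimal illustration, not AWS's billing implementation: the `TrafficProfile` type and function names are hypothetical, and the sketch ignores any free allowances or regional differences.

```python
from dataclasses import dataclass

# Per-LCU limits as listed in the table above.
NEW_CONNECTIONS_PER_SEC = 25
ACTIVE_CONNECTIONS_PER_MIN = 3_000
PROCESSED_GB_PER_HOUR = 1.0
RULE_EVALUATIONS_PER_SEC = 1_000


@dataclass
class TrafficProfile:
    """Hypothetical container for the expected peak of each LCU dimension."""
    new_connections_per_sec: float
    active_connections_per_min: float
    processed_gb_per_hour: float
    rule_evaluations_per_sec: float


def lcus_by_dimension(p: TrafficProfile) -> dict:
    """Convert each traffic dimension into the number of LCUs it consumes."""
    return {
        "new_connections": p.new_connections_per_sec / NEW_CONNECTIONS_PER_SEC,
        "active_connections": p.active_connections_per_min / ACTIVE_CONNECTIONS_PER_MIN,
        "processed_data": p.processed_gb_per_hour / PROCESSED_GB_PER_HOUR,
        "rule_evaluations": p.rule_evaluations_per_sec / RULE_EVALUATIONS_PER_SEC,
    }


def billed_lcus(p: TrafficProfile) -> float:
    """Only the highest-consuming dimension counts, not the sum of all four."""
    return max(lcus_by_dimension(p).values())


# Example: active connections dominate, so the profile needs 30 LCUs.
peak = TrafficProfile(500, 90_000, 12, 4_000)
print(billed_lcus(peak))
```

Sizing a reservation from the dominant dimension, rather than from a rough request count, is one way to keep the reserved floor close to the real demand profile.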

Automating ALB Pre-warming for Financial Market Spikes

Application Load Balancers require tagged ELBV2 API calls to reserve capacity before the 9:00 AM market open spike. Manual fleet management becomes cumbersome and error-prone when coordinating hundreds of accounts during volatile trading windows. The mechanism utilizes two AWS Lambda functions triggered by Amazon EventBridge schedulers to scan for specific tags and adjust LCU values dynamically. One function collects ARNs from member accounts into a central DynamoDB table, while the second applies the reserved capacity floor based on the `LCU-SET` tag value. This architecture ensures stock trading platforms maintain sufficient throughput without reactive scaling latency.

According to AWS Documentation, standard auto-scaling reacts to traffic, while LCU Reservation sets a guaranteed minimum capacity floor.

| Feature | Reactive Auto-scaling | Proactive LCU Reservation |
|---|---|---|
| Trigger Mechanism | Organic metric thresholds | Scheduled EventBridge invocation |
| Latency Risk | Present during cold starts | Eliminated by pre-warming |
| Cost Model | Variable on-demand rates | Fixed hourly reservation fees |
| Best Fit | Steady organic growth | Predictable sharp spikes |

Organic scaling suffices for gradual load increases but introduces latency when volume doubles in under five minutes. The financial trade-off involves paying a fixed rate of $0.008/LCU for reserved capacity versus variable on-demand pricing. A hidden tension exists between cost optimization and availability; reserving too little risks performance degradation, while over-reserving wastes budget on idle capacity. Manual coordination fails at scale because tagging hundreds of Application Load Balancers across accounts requires precise orchestration. Automation via ELBV2 API removes human error from this critical path. Failure to pre-warm results in dropped connections during the initial scaling phase. This gap represents the primary failure mode for high-visibility launches.
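The "doubles in under five minutes" threshold can be checked against historical metrics before committing to a reservation. The sketch below is one possible way to do that, assuming the samples are `(timestamp_seconds, requests_per_second)` pairs sorted by time; the function name and the sample data are hypothetical.

```python
def doubles_within(samples, window_s=300, factor=2.0):
    """Return True if traffic more than doubles within the window.

    samples: list of (timestamp_seconds, requests_per_second), sorted by time.
    Pairs exactly window_s apart are excluded, matching "under five minutes".
    """
    for i, (t0, r0) in enumerate(samples):
        if r0 <= 0:
            continue  # a zero baseline cannot meaningfully "double"
        for t1, r1 in samples[i + 1:]:
            if t1 - t0 >= window_s:
                break  # later samples are even further away
            if r1 > factor * r0:
                return True
    return False


gradual = [(0, 100), (60, 110), (120, 125), (180, 140), (240, 150)]
market_open = [(0, 100), (60, 120), (120, 260)]

print(doubles_within(gradual))      # organic scaling is likely enough
print(doubles_within(market_open))  # candidate for LCU reservation
```

Running a check like this over past events gives a factual basis for deciding which load balancers deserve a reserved floor and which can stay on organic scaling.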

Architecture of Cross-Account LCU Automation Workflows

EventBridge Scheduler Triggers for Cross-Account Lambda Execution

Amazon EventBridge Schedulers invoke the initial scan for ALBs tagged ALB-LCU-R-SCHEDULE = Yes hours before traffic surges. This mechanism relies on temporal triggers to start cross-account workflows without manual intervention. The scheduler calls an AWS Lambda function in the management account, which assumes a role via AWS Security Token Service (AWS STS) to read member account metadata. This data, including ARNs and target LCU values from the LCU-SET tag, lands in DynamoDB for downstream processing. InterLIR analysis indicates this decoupling allows the system to handle hundreds of load balancers across complex organizational structures efficiently. However, the dependency on tag consistency introduces operational risk. If the ALB-LCU-R-SCHEDULE = Yes tag is missing or malformed on a critical resource, the scanner skips the asset entirely, leaving it unprotected during peak demand. Unlike reactive scaling, this proactive model offers zero margin for configuration drift once the window closes.

| Component | Function | Trigger Source |
|---|---|---|
| Metadata Collector | Scans tags, stores ARNs | EventBridge (T-minus hours) |
| Capacity Modifier | Applies LCU-SET values | EventBridge (T-minus 1 hour) |

Operators must ensure tag hygiene equals code quality standards to prevent silent failures. The architecture shifts the failure mode from runtime scaling latency to pre-event configuration errors. A single missed tag results in a capacity gap that standard auto-scaling cannot fill fast enough for sub-five-minute spikes.
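The scanning step described above can be approximated as follows. This is a hedged sketch rather than the actual Lambda code: the function name and record shape are hypothetical, and the client is passed in as a parameter (for example `boto3.client("elbv2")` created from assumed-role credentials). The calls used, `describe_load_balancers` and `describe_tags`, are standard ELBV2 operations.

```python
SCHEDULE_TAG = "ALB-LCU-R-SCHEDULE"
CAPACITY_TAG = "LCU-SET"
TAG_BATCH = 20  # DescribeTags accepts up to 20 resource ARNs per call


def collect_tagged_albs(elbv2):
    """Return one record per ALB that carries both automation tags."""
    arns = []
    paginator = elbv2.get_paginator("describe_load_balancers")
    for page in paginator.paginate():
        arns += [
            lb["LoadBalancerArn"]
            for lb in page["LoadBalancers"]
            if lb.get("Type") == "application"  # skip NLBs and GWLBs
        ]

    records = []
    for start in range(0, len(arns), TAG_BATCH):
        resp = elbv2.describe_tags(ResourceArns=arns[start:start + TAG_BATCH])
        for desc in resp["TagDescriptions"]:
            tags = {t["Key"]: t["Value"] for t in desc["Tags"]}
            # A missing or malformed tag silently drops the ALB from scope.
            if tags.get(SCHEDULE_TAG) == "Yes" and CAPACITY_TAG in tags:
                records.append(
                    {"arn": desc["ResourceArn"], "lcu_set": tags[CAPACITY_TAG]}
                )
    return records
```

In a real collector each record would then be written to the central DynamoDB table; logging the ARNs that were skipped, which this sketch does not do, would make tag drift visible before the event rather than after it.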

STS Assume Role Workflow for Centralized ALB Capacity Updates

AWS Security Token Service (AWS STS) AssumeRole calls let the second AWS Lambda function act on member accounts after it retrieves the stored metadata from DynamoDB one hour before the scheduled event. This workflow addresses the specific problem that cross-account access fails unless an explicit trust relationship is defined in AWS Identity and Access Management (IAM). The function reads the stored LCU-SET value and applies it to member account Application Load Balancers using temporary credentials. A single misconfigured tag prevents the central scanner from populating the database, leaving critical trading platforms exposed to latency during market opens.

| Component | Function | Constraint |
|---|---|---|
| STS Assume Role | Grants temporary access | Requires trust policy |
| DynamoDB | Stores ARN metadata | Holds state briefly |
| LCU-SET Tag | Defines capacity floor | Minimum value 100 |
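The assume-role and capacity-update steps could look roughly like the sketch below. The helper names and the role name are hypothetical, the `aws` partition is assumed, and clients are injected so the logic stays testable. `assume_role` and `modify_capacity_reservation` are the STS and ELBV2 operations involved.

```python
def assume_member_role(sts, account_id, role_name):
    """Exchange the management-account identity for temporary member credentials.

    Returns keyword arguments suitable for boto3.client("elbv2", **creds).
    """
    resp = sts.assume_role(
        RoleArn=f"arn:aws:iam::{account_id}:role/{role_name}",
        RoleSessionName="alb-lcu-modification",  # hypothetical session name
    )
    c = resp["Credentials"]
    return {
        "aws_access_key_id": c["AccessKeyId"],
        "aws_secret_access_key": c["SecretAccessKey"],
        "aws_session_token": c["SessionToken"],
    }


def apply_reservation(elbv2, arn, lcu_set):
    """Set the guaranteed minimum capacity floor on one load balancer."""
    elbv2.modify_capacity_reservation(
        LoadBalancerArn=arn,
        MinimumLoadBalancerCapacity={"CapacityUnits": int(lcu_set)},
    )


def reset_reservation(elbv2, arn):
    """Release the reservation once the event window has closed."""
    elbv2.modify_capacity_reservation(
        LoadBalancerArn=arn,
        ResetCapacityReservation=True,
    )
```

A modifier function would typically call `assume_member_role` once per member account, build an ELBV2 client from the returned credentials, and then loop over the ARNs stored for that account.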

The cost implication involves fixed reservation fees rather than variable scaling charges. Per AWS Documentation, the $0.0225 hourly figure is the base charge per load balancer, while capacity is priced at $0.008 per LCU-hour, so a reservation accrues charges for every reserved LCU-hour whether or not traffic arrives. Operators must balance the certainty of pre-warmed capacity against the financial overhead of unused reserved units during non-peak windows. This tension defines the economic viability of automation versus reactive scaling strategies.

Tag Metadata Requirements for Automated LCU Reservation Scaling

The automation cannot identify targets across accounts without the ALB-LCU-R-SCHEDULE = Yes tag. The metadata collector function scans member accounts for this specific key-value pair before populating DynamoDB. InterLIR analysis confirms that omitting the LCU-SET tag on identified resources causes the modification workflow to skip those instances entirely, leaving them vulnerable to latency during surges.

| Tag Key | Required Value | Function |
|---|---|---|
| ALB-LCU-R-SCHEDULE | Yes | Includes resource in scan scope |
| LCU-SET | Integer > 99 | Defines reserved capacity floor |

Single-account deployments often tolerate manual configuration errors that multi-account architectures cannot absorb. The strict requirement for integer values above a minimum threshold prevents invalid API calls but demands rigorous pre-deployment validation. Operators managing diverse portfolios must ensure tag consistency, as the system treats missing or malformed tags as explicit instructions to ignore the load balancer. This binary failure mode means a single typo in the LCU-SET value results in zero capacity reservation rather than a default fallback. Centralized management relies entirely on this metadata integrity to coordinate AWS Lambda execution across the organization.
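Because a malformed tag is treated as an instruction to skip the load balancer, a pre-deployment check that reports why a resource would be skipped can be useful. The following is a minimal sketch under the rules in the table above; the function name is hypothetical, and case-sensitive matching of `Yes` is an assumption about how the scanner compares values.

```python
import re

MIN_LCU = 100  # "Integer > 99" per the table above


def tag_problems(tags):
    """Return human-readable reasons the ALB would be skipped (empty if none)."""
    problems = []

    schedule = tags.get("ALB-LCU-R-SCHEDULE")
    if schedule is None:
        problems.append("ALB-LCU-R-SCHEDULE tag is missing")
    elif schedule != "Yes":
        problems.append(f"ALB-LCU-R-SCHEDULE is {schedule!r}, expected 'Yes'")

    raw = tags.get("LCU-SET")
    if raw is None:
        problems.append("LCU-SET tag is missing")
    elif not re.fullmatch(r"[0-9]+", raw):
        problems.append(f"LCU-SET is {raw!r}, expected a plain integer")
    elif int(raw) < MIN_LCU:
        problems.append(f"LCU-SET is {raw}, below the minimum of {MIN_LCU}")

    return problems


print(tag_problems({"ALB-LCU-R-SCHEDULE": "Yes", "LCU-SET": "250"}))  # no problems
print(tag_problems({"ALB-LCU-R-SCHEDULE": "Yes", "LCU-SET": "2S0"}))  # typo caught
```

Returning reasons instead of a bare boolean turns the binary failure mode into an actionable report that can run in CI or as a scheduled audit.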

Deploying Tag-Based LCU Reservation via CloudFormation

Defining CloudFormation Resources for LCU Automation

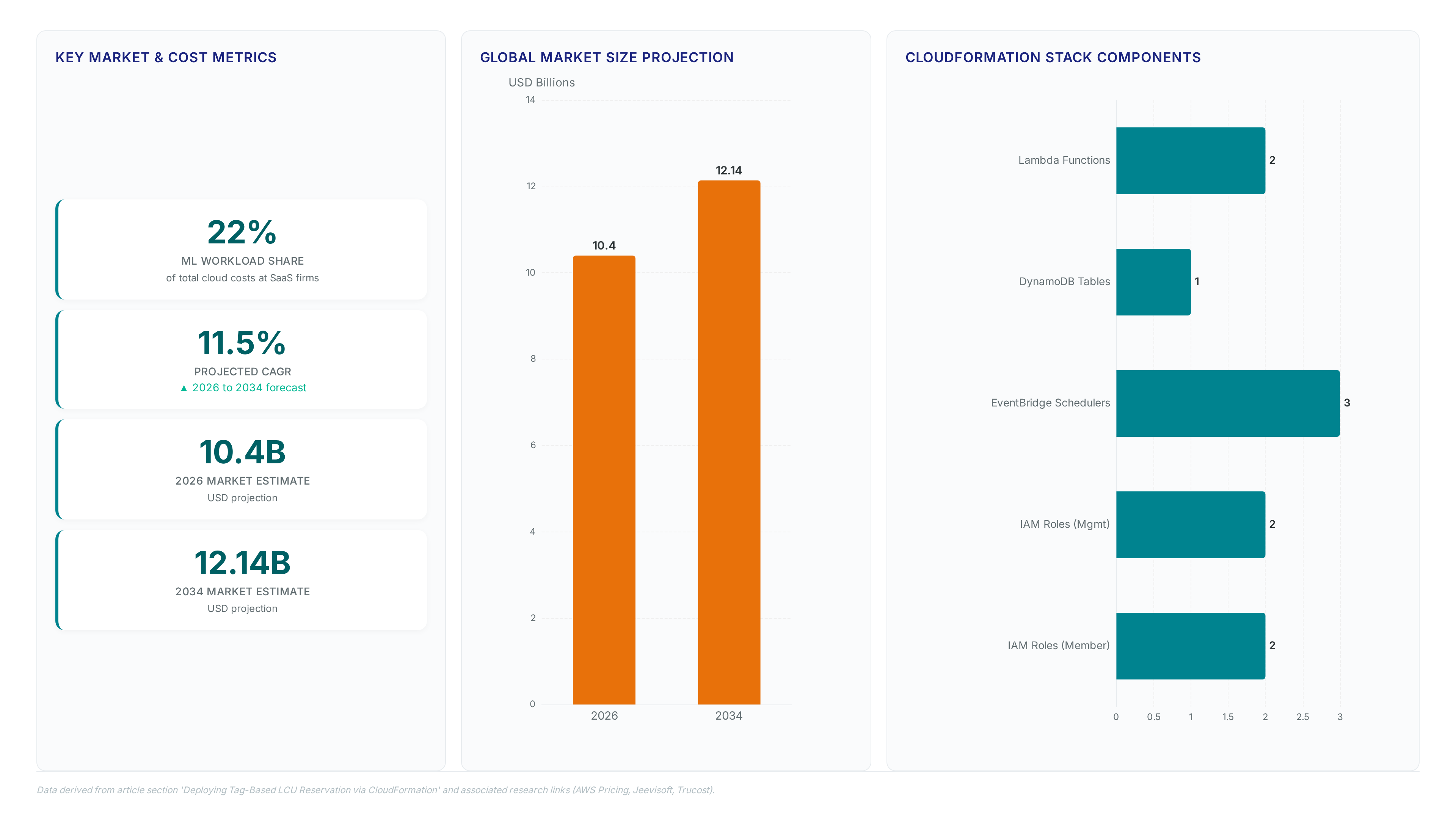

Deploying ALBCapacityAutomationMgmtAccount.yaml creates two Lambda functions, one DynamoDB table, three EventBridge Schedulers, and two IAM roles.

- The MetadataCollector function scans member accounts for tagged ALBs.

- The LCUModification function adjusts capacity using values from the LCU-SET tag.

- Three EventBridge Schedulers trigger collection, modification, and reset workflows.

- Two IAM roles enable cross-account access via AWS STS Assume Role policies.

Member account deployment via ALBCapacityAutomationMemberAccount.yaml generates two additional IAM roles with specific trust policies. The architecture separates scanning logic from execution to minimize permission scope across the organization. InterLIR analysis indicates this split reduces the blast radius of credential compromise during high-frequency updates. However, relying on tag consistency introduces a single point of failure if naming conventions drift. Operators must enforce strict tag governance because missing ALB-LCU-R-SCHEDULE keys exclude resources from automation entirely. The cost implication involves balancing the overhead of maintaining these CloudFormation stacks against the risk of manual pre-warming errors during market opens. While the templates standardize deployment, the operational burden shifts to monitoring tag compliance rather than managing capacity directly.
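The three schedulers (collection, modification, reset) need times derived from the event window. The sketch below shows one way to compute one-time EventBridge Scheduler `at(...)` expressions. The function name is hypothetical, the three-hour collection lead is an assumed value (the article only says "hours before"), and the one-hour modification lead follows the text.

```python
from datetime import datetime, timedelta


def schedule_expressions(event_start, event_end,
                         collect_lead=timedelta(hours=3),
                         modify_lead=timedelta(hours=1)):
    """Build one-time schedule expressions for the three workflow stages."""

    def at(ts):
        # EventBridge Scheduler one-time format: at(yyyy-mm-ddThh:mm:ss)
        return f"at({ts.strftime('%Y-%m-%dT%H:%M:%S')})"

    return {
        "collect": at(event_start - collect_lead),  # scan tags, fill DynamoDB
        "modify": at(event_start - modify_lead),    # apply LCU-SET values
        "reset": at(event_end),                     # release the reservation
    }


# Example: a 9:00 AM market open with an assumed 4:00 PM close.
exprs = schedule_expressions(datetime(2025, 3, 3, 9, 0), datetime(2025, 3, 3, 16, 0))
for stage, expr in exprs.items():
    print(stage, expr)
```

The expressions carry no time zone, so the schedule's configured time zone determines when they fire; for recurring events a cron-style expression may fit better than one-time schedules.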

Configuring ALB Tags for Scheduled Capacity Updates

Tagging Application Load Balancers with ALB-LCU-R-SCHEDULE = Yes initiates the metadata scan across the organization. Operators must apply two distinct tags to every target resource before the EventBridge scheduler triggers.

1. Set the key ALB-LCU-R-SCHEDULE to the value Yes to include the balancer in the discovery scope.

2. Assign the key LCU-SET an integer value representing the guaranteed capacity floor.

The modification Lambda reads the LCU-SET integer to provision the exact reservation level via the ELBV2 API. AWS documentation identifies event ticket sales and product launches as primary use cases requiring this precision. However, setting the LCU-SET value too high creates waste if actual traffic fails to double normal volume. InterLIR recommends validating tag syntax via CLI before production deployment to prevent silent failures. A missing tag excludes the resource from the DynamoDB inventory, leaving it unprotected during the scheduled window.

Incorrect tagging logic creates a false sense of security where operators assume protection exists without verification.
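One way to avoid that false sense of security is to apply both tags programmatically and read them back immediately. The sketch below assumes an injected ELBV2 client (for example `boto3.client("elbv2")`); the helper names are hypothetical, while `add_tags` and `describe_tags` are existing ELBV2 operations.

```python
def tag_for_prewarming(elbv2, arn, lcu_floor):
    """Apply both automation tags to a single load balancer."""
    if lcu_floor < 100:
        raise ValueError("LCU-SET must be an integer of at least 100")
    elbv2.add_tags(
        ResourceArns=[arn],
        Tags=[
            {"Key": "ALB-LCU-R-SCHEDULE", "Value": "Yes"},
            {"Key": "LCU-SET", "Value": str(lcu_floor)},  # tag values are strings
        ],
    )


def read_back_tags(elbv2, arn):
    """Fetch the two automation tags so the operator can confirm what was stored."""
    resp = elbv2.describe_tags(ResourceArns=[arn])
    tags = {
        t["Key"]: t["Value"]
        for desc in resp["TagDescriptions"]
        for t in desc["Tags"]
    }
    return {key: tags.get(key) for key in ("ALB-LCU-R-SCHEDULE", "LCU-SET")}
```

Comparing the read-back result with the intended values closes the gap between "tags were requested" and "tags are present on the resource".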

Mitigating IAM Role Conflicts in Multi-Account Deployments

Conflicting IAM role names in member accounts trigger immediate STS Assume Role failures during automation execution. The management account requires Organizations enrollment for all targets to prevent cross-account access denial. Absent active enrollment, the MetadataCollector function cannot retrieve ALB attributes or write to DynamoDB. Name collisions between local roles and deployment templates break the trust chain required for the LCUModification workflow. Operators must audit existing role namespaces before applying the ALBCapacityAutomationMemberAccount.yaml stack. InterLIR evaluation indicates that naming conflicts are the primary cause of silent failures in large-scale deployments where manual pre-warming previously masked permission gaps. The tension exists between standardized naming conventions and legacy infrastructure requirements. Remediation requires renaming local roles or adjusting the template policy suffixes. This prerequisite ensures the central scheduler maintains visibility across the entire fleet.

- Verify Organizations status shows all member accounts as Active.

- Scan member accounts for existing roles matching the deployment template names.

- Modify the CloudFormation parameters if role name customization is required.

- Apply the member account stack only after namespace validation completes.
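The first two checks in the list above can be scripted. This is a rough sketch with injected clients (for example `boto3.client("organizations")` and a member-account `boto3.client("iam")`). The function names are hypothetical, and the role names passed in must come from the actual template, which this article does not list. The sketch reads the `Status` field that `list_accounts` returns for each account.

```python
def inactive_accounts(org):
    """Return IDs of member accounts whose status is not ACTIVE."""
    ids = []
    for page in org.get_paginator("list_accounts").paginate():
        ids += [a["Id"] for a in page["Accounts"] if a.get("Status") != "ACTIVE"]
    return ids


def conflicting_roles(iam, template_role_names):
    """Return template role names that already exist in the member account."""
    existing = set()
    for page in iam.get_paginator("list_roles").paginate():
        existing.update(role["RoleName"] for role in page["Roles"])
    return existing & set(template_role_names)
```

If either function returns a non-empty result, the member account stack should wait until the account status or the role namespace has been resolved.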

Operational ROI and Cost Efficiency of Automated Pre-Warming

Defining Operational ROI in Automated LCU Pre-Warming

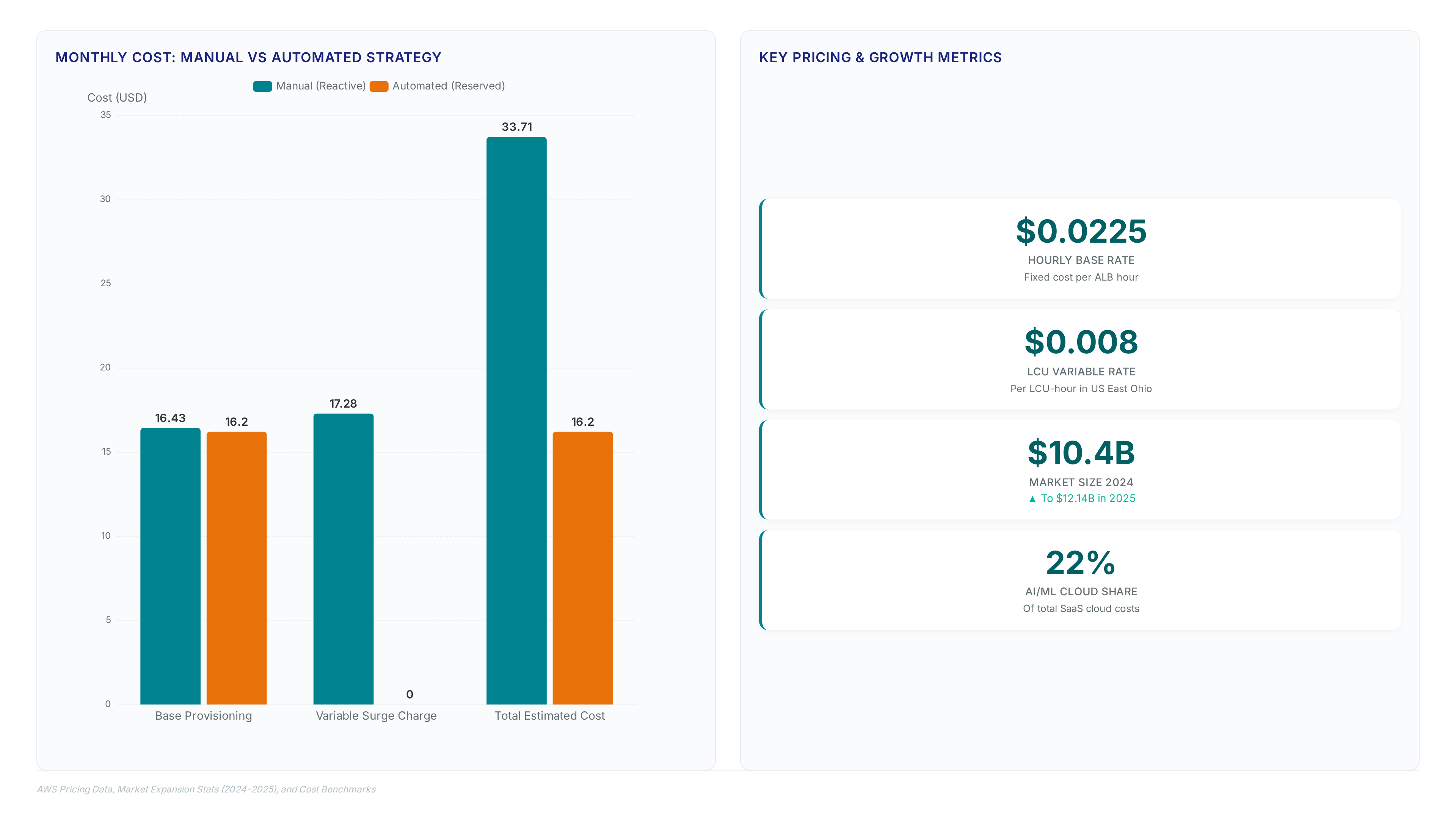

According to Amazon Web Services, the base hourly rate sits at $0.0225 per hour, totaling roughly $16.20 to $16.43 per month (720 to 730 hours) just for provisioning. This fixed cost exists regardless of traffic volume, creating a financial floor for every Application Load Balancer deployment. Operators asking "should I use LCU reservation?" must analyze the variable component, where costs escalate during surges. According to Amazon Web Services, the cost per LCU in the US East (Ohio) region is $0.008 per LCU-hour. Unchecked spikes convert predictable operational expenditure into volatile charges that strain budget forecasts. Automation shifts this model by reserving capacity ahead of known events rather than reacting to metered usage.
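The arithmetic behind these figures is easy to reproduce. The sketch below uses the two rates quoted above and assumes, for illustration only, that reserved LCUs accrue at the same $0.008 per LCU-hour rate; the function names and the 500-LCU, three-hour window are hypothetical. `Decimal` is used so the currency math is exact.

```python
from decimal import Decimal

ALB_HOURLY_USD = Decimal("0.0225")  # base charge per load balancer hour
LCU_HOURLY_USD = Decimal("0.008")   # per LCU-hour, US East (Ohio) as quoted


def monthly_base_fee(hours_in_month):
    """Fixed provisioning cost for one ALB, independent of traffic."""
    return ALB_HOURLY_USD * hours_in_month


def reservation_cost(reserved_lcus, hours):
    """Cost of holding a capacity floor for a window (rate is an assumption)."""
    return LCU_HOURLY_USD * reserved_lcus * hours


print(monthly_base_fee(720))     # 30-day month
print(monthly_base_fee(730))     # average month
print(reservation_cost(500, 3))  # 500 LCUs held for a three-hour window
```

Scoping the reservation to the event window, then resetting it, is what keeps the reserved-capacity line item small relative to a floor left in place all month.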

Manual Versus Automated LCU Management Cost Efficiency

Market expansion from $10.4 billion in 2024 to $12.14 billion in 2025 drives the shift from manual LCU management to automated reservation strategies. Operators relying on human intervention face unavoidable latency during traffic spikes, whereas code-driven provisioning guarantees capacity availability. The financial divergence becomes apparent when contrasting reactive scaling against proactive commitment models.

| Feature | Manual Scaling | Automated Reservation |
|---|---|---|
| Response Time | Reactive (minutes) | Proactive (scheduled) |
| Error Rate | High (human-dependent) | Near-zero (code-set) |
| Cost Predictability | Variable | Fixed floor |
| Operational Overhead | Continuous monitoring | Event-driven execution |

According to Amazon Web Services, LCU reservations combine with Savings Plans to create a complete savings strategy that manual processes cannot replicate efficiently. This integration is vital as AI workloads consume significant cloud budgets. The limitation remains that automation demands strict tag governance; missing ALB-LCU-R-SCHEDULE tags exclude resources from the workflow entirely. Consequently, organizations adopting this approach must enforce naming conventions before deployment. InterLIR assessment indicates that without centralized control, multi-account environments suffer from inconsistent capacity floors. Total cost of ownership improves only when operators eliminate the variability of on-demand LCU-hour charges during known surge windows. The cost is the initial effort required to configure EventBridge schedulers and Lambda permissions correctly. Failure to automate results in paying premium rates for capacity that could have been reserved at a lower proven cost. Strategic pre-warming transforms variable expense into a predictable operational constant.

About

Nikita Sinitsyn, Customer Service Specialist at InterLIR, brings a unique operational perspective to the complexities of AWS Application Load Balancer management. While InterLIR specializes in optimizing global network availability through IPv4 resource redistribution, Nikita's daily work involves ensuring smooth connectivity and resolving critical infrastructure challenges for diverse clients. This hands-on experience with network stability and high-traffic environments directly informs the necessity of automating Load Balancer Capacity Unit (LCU) reservations. Understanding that unexpected traffic surges can disrupt services just as quickly as IP shortages, Nikita applies his eight years of telecommunications support expertise to advocate for proactive capacity planning. By using ELBV2 API automation, organizations can mirror the efficiency InterLIR provides in IP allocation, ensuring Application Load Balancers remain resilient without manual intervention. This approach bridges the gap between raw network resources and the dynamic scaling required in modern cloud architectures, reflecting a shared commitment to operational excellence and reliable service delivery.

Conclusion

Scale breaks the illusion of infinite elasticity when sudden traffic surges trigger cascading on-demand premiums that erode margins instantly. While the market expands rapidly, relying on reactive scaling leaves organizations vulnerable to latency spikes and unpredictable billing shocks that manual intervention simply cannot mitigate fast enough. The operational debt accumulates silently until a flash sale or marketing event converts a manageable budget into a financial incident. You must transition to automated reservation strategies immediately if your infrastructure experiences predictable load patterns exceeding two hours daily. Waiting beyond the next fiscal quarter locks you into inefficient spend cycles that compound as AI workloads intensify demand.

Start by auditing your current EventBridge schedules against historical traffic logs this week to identify at least three recurring surge windows where manual scaling currently dominates. Implement a strict tagging protocol for these specific timeframes before attempting any code deployment, as missing metadata will render your automation logic inert. This fundamental step ensures that your shift from variable chaos to fixed predictability actually functions when the pressure hits. Ignoring this structured approach guarantees you will continue subsidizing cloud provider profits with unnecessary overhead rather than investing in genuine innovation.