Interconnect cuts AWS-to-Google setup to minutes

AWS Interconnect cuts weeks of manual cross-connect provisioning down to minutes by creating private Layer 3 links to Google Cloud. For enterprises building multi-cloud architectures, this removes the dependency on cumbersome colocation arrangements. The service abstracts the physical network layer, replacing fragile VPN tunnels and third-party fabrics with a unified, quad-redundant backbone.

This article examines how Interconnect multi-cloud uses standards jointly engineered by AWS and Google Cloud to automate route propagation without customer-managed physical hardware. It also covers the strategic pivot away from legacy approaches that still burden Azure and Oracle users who rely on manual colocation facilities.

While Microsoft Azure and Oracle Cloud Infrastructure support arrives later in 2026, the current integration with Google Cloud sets a new baseline for network resiliency. Robert Kennedy, VP of network services at AWS, emphasizes that this standard removes the complexity of physical components entirely. By building high availability directly into the service definition, organizations can bypass the heavy lifting previously required to establish secure, high-speed data flows between disparate cloud environments.

The Role of AWS Interconnect in Modern Multi-Cloud Architecture

AWS Interconnect Multi-Cloud and Last Mile Capabilities

April 29, 2026 marks the general availability of AWS Interconnect, a managed private connectivity service. It establishes Layer 3 paths between AWS VPCs and external clouds such as Google Cloud without physical cross-connects. The service has two distinct operational modes: Interconnect multi-cloud for cloud-to-cloud traffic, and Interconnect last mile for branch office access through providers such as Lumen Technologies. Legacy cross-cloud links built on VPN tunnels and colocation cross-connects required weeks of coordination, whereas this model automates BGP propagation and MACsec encryption through the Direct Connect console. The abstraction layer does not remove the need to match MTU values across peered environments. Because default Maximum Transmission Unit settings differ between hyperscalers, operators must align them manually to prevent silent packet fragmentation, a hidden failure mode absent from previous manual setups. Operational risk shifts from physical provisioning delays to configuration drift in logical parameters.

| Feature | Interconnect multi-cloud | Interconnect Last Mile |
|---|---|---|
| Primary Use Case | Cloud-to-Cloud routing | Branch-to-Cloud access |
| Topology | VPC to External VPC | On-premises to AWS |
| Provisioning Source | AWS Direct Connect Console | Network Provider Portal |
| Encryption Default | MACsec enabled | MACsec enabled |

InterLIR documentation notes that although the service guarantees 99.99% availability, the automated activation key exchange removes visibility into the diversity of the underlying physical paths. Provisioning completes in minutes via the AWS Direct Connect console. According to InfoQ, the workflow abstracts physical routers and VLAN attachments into a managed transport resource: operators select source and destination regions, specify bandwidth, and enter a Google Cloud project ID to generate an activation key. Routes then propagate automatically in both directions over BGP, removing manual peer configuration.

Interconnect last mile works differently. It connects on-premises branches to AWS through participating network providers rather than linking two public clouds directly. The location of the traffic endpoint therefore dictates the choice between the two modes: east-west replication between hyperscalers suits multi-cloud, while north-south enterprise access suits last mile.

The abstraction layer hides physical redundancy details, so operators rely on the quad-redundant design across facilities instead of managing routers explicitly. It does not cover Layer 3 parameters, however: mismatched MTU values between peered VPCs still cause silent data loss even when BGP sessions establish successfully.
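The endpoint rule above is simple enough to encode directly. The sketch below is illustrative only: the `Endpoint` categories and the `choose_interconnect_mode` function are hypothetical names, not part of any AWS API, and the provisioning sources come from the comparison table in this section.

```python
from enum import Enum


class Endpoint(Enum):
    """Hypothetical classification of the non-AWS end of a traffic flow."""
    PUBLIC_CLOUD = "public cloud"    # e.g. a Google Cloud VPC (east-west traffic)
    BRANCH_OFFICE = "branch office"  # on-premises site (north-south traffic)


# Mode and provisioning source per endpoint type, as summarized in the table.
MODE_BY_ENDPOINT = {
    Endpoint.PUBLIC_CLOUD: ("Interconnect multi-cloud", "AWS Direct Connect console"),
    Endpoint.BRANCH_OFFICE: ("Interconnect last mile", "Network provider portal"),
}


def choose_interconnect_mode(remote: Endpoint) -> tuple[str, str]:
    """Return (mode, provisioning source) for the given remote endpoint type."""
    try:
        return MODE_BY_ENDPOINT[remote]
    except KeyError:
        raise ValueError(f"no Interconnect mode defined for {remote!r}") from None


if __name__ == "__main__":
    for endpoint in Endpoint:
        mode, source = choose_interconnect_mode(endpoint)
        print(f"{endpoint.value}: {mode} (provisioned via {source})")
```

Keeping the mapping in a table-like dictionary rather than in branching logic makes it easy to extend if Azure or Oracle endpoints gain native support later.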

InterLIR documentation also notes that MACsec encryption applies by default to both modes, securing data in transit without additional tunnel overhead. The trade-off is reduced visibility into underlying physical link states compared with traditional dedicated interconnects: provisioning speed increases while granular control over the physical topology decreases.

Managed Interconnect Versus Manual VPN and Colocation Workflows

InfoQ data shows that, before this release, cross-cloud links required weeks of manual coordination with third-party fabrics. The AWS-Google Cloud launch is a jointly engineered solution that replaces physical colocation dependencies with automated provisioning. Operators now select regions and enter a project ID to generate an activation key instead of scheduling rack space. Routes propagate automatically over BGP, eliminating the ongoing labor costs of tunnel maintenance. Microsoft Azure currently lacks an equivalent native managed interconnect to AWS, so those paths still rely on third-party providers. The result is an asymmetric operational model: Azure-to-AWS traffic still demands external orchestration layers, while the Google path is direct.

| Feature | Legacy Workflow | AWS Interconnect |
|---|---|---|
| Provisioning Time | Weeks | Minutes |
| Physical Layer | Colocation Cross-connects | Abstracted |
| Routing Config | Manual BGP Peering | Automatic Propagation |
| Azure Support | Via Third Parties | Not Native Yet |

Coordination with facility managers is no longer necessary, but lifecycle management now depends entirely on the Direct Connect console, a form of vendor lock-in. Network teams gain speed but lose the ability to insert the custom physical handoffs that shared colocation environments allowed. The choice between last mile and multi-cloud depends entirely on whether the endpoint is a branch office or another public cloud provider. Automation accelerates deployment, while visibility into the underlying transport mechanics decreases compared with manual setups.

Inside the Mechanics of Cross-Cloud Data Flow and Encryption

BGP and MACsec Encryption in AWS-Google Data Flow

According to Usage.ai data, traffic flows entirely over the AWS global backbone and Google Cloud private networks, never crossing the public internet. This path isolation means BGP route propagation occurs only between trusted edge routers within the jointly engineered fabric. Operators receive automatic bidirectional route updates without configuring peer sessions manually or maintaining persistent tunnels. Every physical link uses IEEE 802.1AE MACsec encryption by default to secure data in transit between provider facilities. As documented at amazon.com/interconnect/multi-cloud/, the architecture distributes logical links across at least two physical facilities to achieve quad-redundancy, so a single hardware outage or maintenance window does not disrupt cross-cloud availability. This operational simplicity still depends on matching MTU values across peered environments to avoid silent packet fragmentation. Default settings often differ between clouds and must be aligned manually before production traffic starts, or throughput degrades.

Failure to align these parameters results in immediate connectivity loss despite successful BGP session establishment.

MTU Mismatch and Silent Data Loss in Peered VPCs

Divergent default MTUs on AWS and Google Cloud cause silent data loss when jumbo frames cross peered VPC boundaries without validation. The problem arises because AWS instances, and Direct Connect interfaces with jumbo frames enabled, commonly use 9001 bytes, while standard Ethernet paths cap at 1500 bytes. InterLIR analysis indicates that unvalidated overlapping IP ranges compound this risk by breaking BGP route propagation entirely. Operators must manually verify Maximum Transmission Unit alignment before enabling production traffic across the multi-cloud fabric.
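The arithmetic behind this failure mode is that a path's effective MTU is the smallest MTU of any hop along it. Any larger packet is either fragmented or, with the Don't Fragment bit set, dropped. The sketch below uses hypothetical hop names and MTU values chosen only for illustration; substitute the values actually configured on each interface.

```python
def path_mtu(hop_mtus: dict[str, int]) -> int:
    """The effective MTU of a path is the smallest MTU of any hop on it."""
    if not hop_mtus:
        raise ValueError("path has no hops")
    return min(hop_mtus.values())


def oversized_packets(packet_sizes: list[int], hop_mtus: dict[str, int]) -> list[int]:
    """Return packet sizes (bytes, including the IP header) that exceed the path MTU.

    With Don't Fragment set, these are dropped at the narrowest hop; if the
    ICMP "fragmentation needed" reply is filtered, the drop is silent.
    """
    limit = path_mtu(hop_mtus)
    return [size for size in packet_sizes if size > limit]


# Hypothetical hop MTUs for illustration only; read real values from each interface.
hops = {
    "aws-instance": 9001,
    "interconnect-link": 1500,
    "gcp-vpc": 1460,
}

if __name__ == "__main__":
    print(path_mtu(hops))                                    # 1460
    print(oversized_packets([576, 1460, 1500, 9001], hops))  # [1500, 9001]
```

The instance-side MTU of 9001 never matters here: only the narrowest hop sets the limit, which is why a jumbo-frame setting on one side alone does nothing but create oversized packets.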

| Failure Mode | Root Cause | Operational Impact |
|---|---|---|
| MTU Mismatch | Divergent interface defaults | Silent data loss for large packets |
| IP Overlap | Duplicate CIDR blocks | Complete routing table rejection |
| BGP Flap | Unreachable next hops | Intermittent connectivity black holes |

Ignoring these checks risks total path failure, not merely degraded performance. Remediation is also slower: fixing configuration errors on the Google Cloud side requires CLI intervention, because no web console for provisioning is available at general availability. During an active incident, this manual dependency slows recovery compared with the fully automated AWS workflow.

- Audit CIDR blocks on both cloud sides to prevent address space collisions.

- Set interface MTUs to the lowest value on the path if jumbo frames are not supported end to end.

- Validate bidirectional reachability using synthetic monitoring tools before shifting live workloads.
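The first checklist item, the CIDR audit, can be automated with Python's standard `ipaddress` module. This is a minimal sketch: the prefix lists are hypothetical, and in practice they would be exported from each cloud's VPC configuration.

```python
import ipaddress
from itertools import product


def find_overlaps(aws_cidrs: list[str], gcp_cidrs: list[str]) -> list[tuple[str, str]]:
    """Return every (AWS, Google Cloud) CIDR pair whose address ranges collide.

    ip_network() raises ValueError for malformed prefixes or prefixes with
    host bits set, which is itself a useful audit signal.
    """
    aws_nets = [ipaddress.ip_network(c) for c in aws_cidrs]
    gcp_nets = [ipaddress.ip_network(c) for c in gcp_cidrs]
    return [
        (str(a), str(g))
        for a, g in product(aws_nets, gcp_nets)
        if a.version == g.version and a.overlaps(g)
    ]


if __name__ == "__main__":
    # Hypothetical address plans for illustration only.
    aws = ["10.0.0.0/16", "10.1.0.0/16"]
    gcp = ["10.1.128.0/20", "192.168.0.0/24"]
    for pair in find_overlaps(aws, gcp):
        print("overlap:", pair)  # overlap: ('10.1.0.0/16', '10.1.128.0/20')
```

An empty result is the precondition for letting automatic BGP propagation run; any reported pair needs renumbering before activation.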

Network teams that rely on automatic BGP propagation without pre-checks face outages immediately upon activation. The architectural benefit of managed cross-connects disappears if Layer 3 parameters remain inconsistent between providers. Precise configuration prevents the silent drops that standard ping tests often miss, because default ping payloads are far smaller than the path MTU.

CLI Provisioning and Transit Gateway Aggregation Workflows

At GA, Google Cloud provisioning requires the CLI because the web console does not support it, so operators must script Cross-Cloud Interconnect activation themselves. InfoQ confirms that completing the activation key step on the Google side requires command-line interaction. This manual step introduces friction absent from the AWS Direct Connect console workflow. The result is operational asymmetry: teams must maintain Google-specific automation scripts while AWS offers native GUI tools, and no unified interface exists for the Google path.

For regional scaling, AWS Transit Gateway aggregates multiple VPC attachments into a single routing hub. This simplifies the topology by centralizing route propagation instead of managing mesh peering. InterLIR analysis indicates that centralized hubs reduce configuration errors during multi-cloud expansion. Operators gain a clean star topology but lose direct visibility into paths between specific spoke VPCs, and traffic engineering becomes policy-driven rather than link-driven.

| Workflow Stage | AWS Method | Google Cloud Method |
|---|---|---|
| Provisioning | Direct Connect Console | Command Line Interface |
| Aggregation | Transit Gateway Hub | Manual Peering or Hub |
| Route Sync | Automatic BGP Propagation | Automatic BGP Propagation |

The trade-off for this aggregation model is reduced granular control over individual attachment metrics within the hub. Global designs extend this pattern using AWS Cloud WAN for segment-based routing across regions. Network teams must verify MTU alignment before enabling jumbo frames to prevent silent packet drops.
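The case for hub aggregation is easiest to see by counting connections. A full mesh of n VPCs needs n(n-1)/2 pairwise peerings, while a hub such as Transit Gateway needs one attachment per VPC. The function names below are illustrative, and the count covers connections only, not route tables or per-attachment policies.

```python
def full_mesh_peerings(vpc_count: int) -> int:
    """Pairwise peerings needed for every VPC to reach every other VPC directly."""
    if vpc_count < 0:
        raise ValueError("vpc_count must be non-negative")
    return vpc_count * (vpc_count - 1) // 2


def hub_attachments(vpc_count: int) -> int:
    """Attachments needed when each VPC connects once to a central hub."""
    if vpc_count < 0:
        raise ValueError("vpc_count must be non-negative")
    return vpc_count


if __name__ == "__main__":
    for n in (3, 10, 50):
        print(f"{n} VPCs: mesh={full_mesh_peerings(n)}, hub={hub_attachments(n)}")
    # 3 VPCs: mesh=3, hub=3
    # 10 VPCs: mesh=45, hub=10
    # 50 VPCs: mesh=1225, hub=50
```

At three VPCs the two designs are equal. Beyond that, the mesh grows quadratically while the hub grows linearly, which is why the hub model pays off during expansion.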

Strategic Advantages of Managed Interconnect Over Legacy Approaches

AWS Interconnect vs Azure ExpressRoute Managed Service Models

According to Competitive Environment and Technical Specifications, AWS Interconnect operates as a jointly engineered Layer 3 service, while Microsoft Azure relies on customer-managed routing for cross-cloud paths. This architectural divergence forces operators to choose between native automation and third-party dependency. AWS Interconnect abstracts physical cross-connects through direct API integration, whereas ExpressRoute requires external providers like Megaport or Equinix to bridge gaps to AWS or Google Cloud. The result is a binary operational model: fully managed workflows versus fragmented manual coordination.

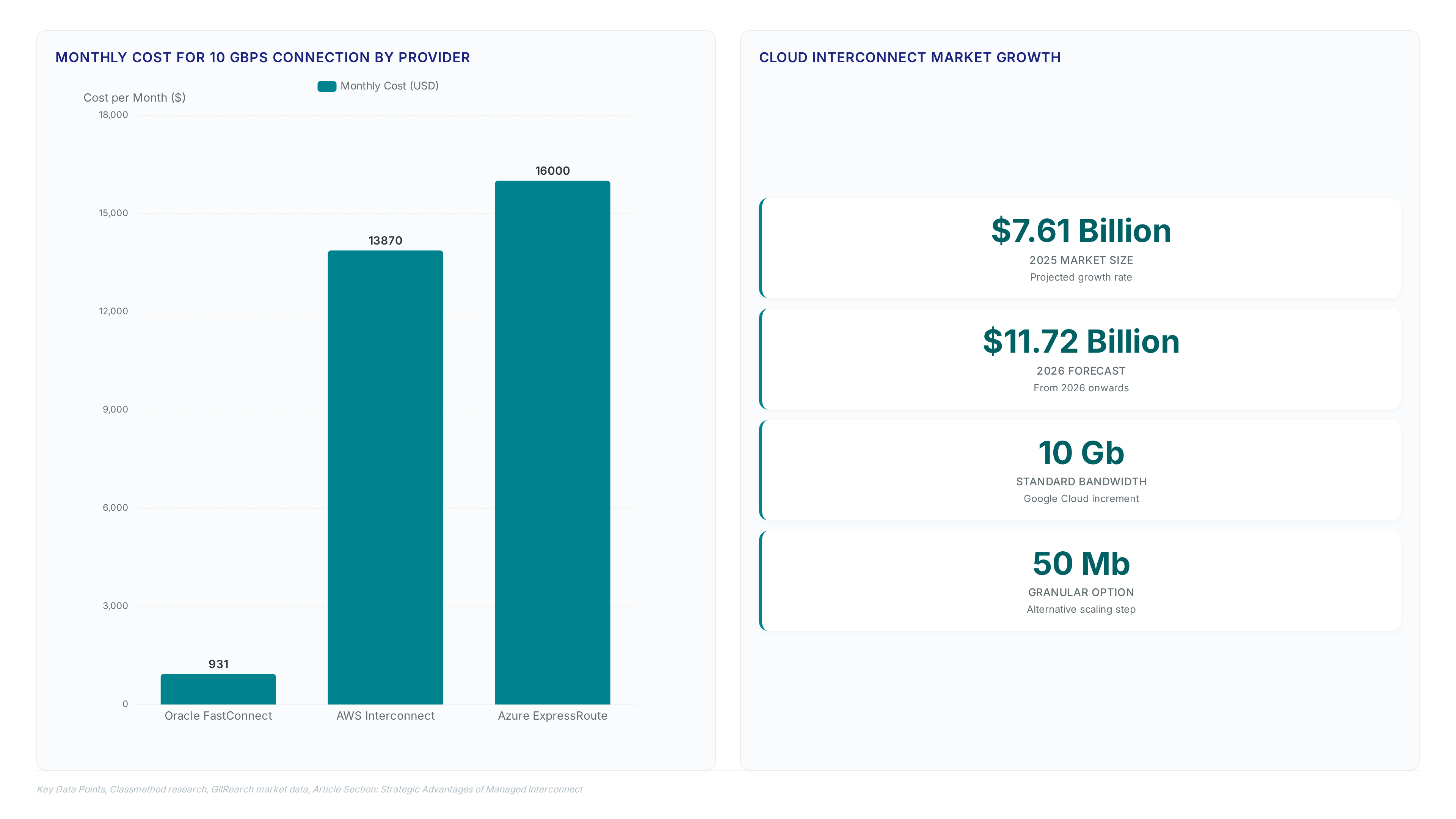

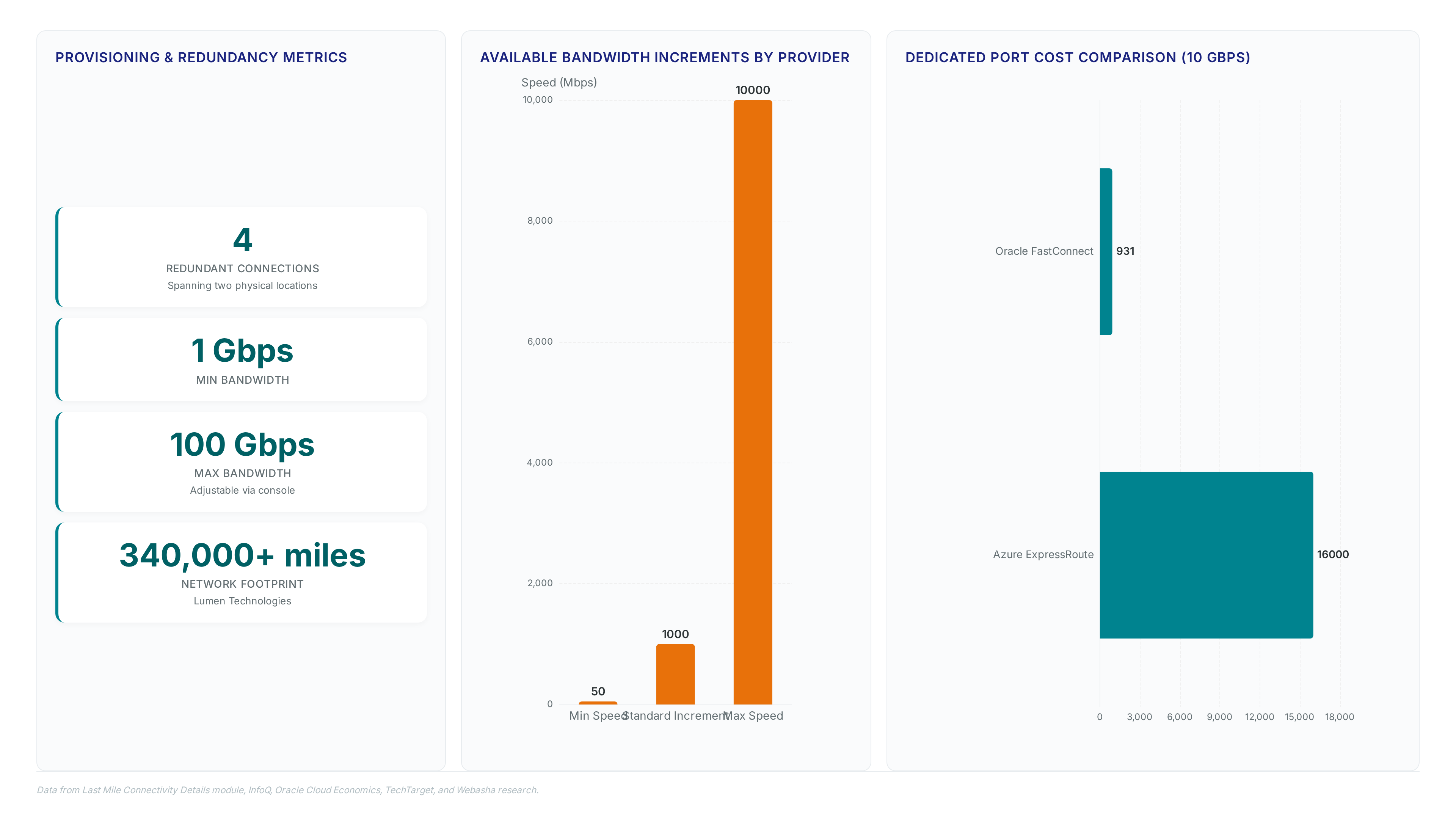

According to industry specifications, Google Cloud Dedicated Interconnect typically offers bandwidth in 10 Gbps increments, creating rigid scaling steps compared with more granular options elsewhere. Azure connectivity faces a different constraint: its reliance on external partners introduces latency variability that internal backbones avoid. Organizations that prioritize multi-vendor flexibility sacrifice the deterministic performance of jointly engineered fabrics, and operators managing hybrid estates face added complexity in maintaining consistent security postures across disparate provider handoffs. InterLIR analysis indicates that this fragmentation prevents unified telemetry, leaving blind spots during incident response. The strategic cost is higher operational overhead to reconcile disjointed routing domains.

Simplified Billing with Flat Hourly Rates Versus Complex Per-GB Fees

AWS pricing documentation defines the single fee as a consolidated capacity-tier charge that eliminates separate VLAN attachment and port-hour line items. The model also removes the complex per-GB data transfer fees often associated with cross-cloud traffic between separate provider backbones. In contrast, third-party multi-cloud providers such as Megaport or Equinix frequently layer port costs on top of variable data-consumption charges. Azure ExpressRoute requires customer-managed routing for non-Microsoft clouds, so operators pay external vendors for connectivity that AWS Interconnect includes natively.

InterLIR assessment indicates that flat-rate billing reduces financial forecasting errors caused by unpredictable traffic bursts during replication events. The limitation is reduced granularity; organizations with extremely low intercloud utilization may pay a premium for unused capacity tiers compared to strict pay-as-you-go models. Operators must calculate baseline throughput requirements accurately before selecting a fixed capacity tier to avoid overspending on idle bandwidth. Financial planning becomes more predictable when variable spikes do not trigger unexpected invoices from multiple vendors.
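Whether a flat tier beats metered billing comes down to a break-even volume: the flat monthly fee divided by the per-GB rate it replaces. The sketch converts that volume into a fraction of the tier's monthly capacity. The $0.02/GB metered rate is a hypothetical figure, not a quoted price, and the calculation assumes a 30-day month and decimal gigabytes; the $9,000.90 fee is the 10 Gbps figure cited in the next section.

```python
SECONDS_PER_MONTH = 30 * 24 * 3600  # assumption: 30-day billing month


def monthly_capacity_gb(capacity_gbps: float) -> float:
    """Decimal GB a link can carry in one month at 100% utilization."""
    return capacity_gbps / 8 * SECONDS_PER_MONTH  # Gbps / 8 = GB per second


def break_even_utilization(flat_fee: float, per_gb_rate: float, capacity_gbps: float) -> float:
    """Average utilization above which the flat fee is cheaper than per-GB billing."""
    if per_gb_rate <= 0 or capacity_gbps <= 0:
        raise ValueError("rate and capacity must be positive")
    break_even_gb = flat_fee / per_gb_rate
    return break_even_gb / monthly_capacity_gb(capacity_gbps)


if __name__ == "__main__":
    # per_gb_rate is hypothetical; replace it with the metered rate you would otherwise pay.
    u = break_even_utilization(flat_fee=9000.90, per_gb_rate=0.02, capacity_gbps=10)
    print(f"Flat tier wins above {u:.1%} average utilization")  # Flat tier wins above 13.9% average utilization
```

Below the break-even utilization, metered billing would be cheaper. That gap is the low-utilization premium described above.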

Dedicated 10 Gbps Connection Cost Comparison Across Cloud Providers

Oracle Cloud Infrastructure FastConnect costs approximately $931/month, establishing a low-cost baseline for dedicated capacity. This figure contrasts sharply with hyperscaler-managed multi-cloud options, where automation commands a premium. According to Key Data Points, AWS Interconnect multi-cloud carries an approximate monthly charge of $9,000.90, reflecting the value of native console integration and automatic BGP propagation. Microsoft Azure ExpressRoute costs roughly $16,000/month for equivalent 10 Gbps throughput, though this path often requires a third-party routing fabric for non-Microsoft destinations. Google Cloud Partner Cross-Cloud Interconnect falls between the AWS and Azure figures at $13,870.00/month, per Classmethod research, highlighting significant price variance for similar dedicated capacity. Oracle's lower list price does not account for the engineering hours required to manage disjointed physical cross-connects manually. Conversely, the higher AWS and Google fees pay for a jointly engineered specification that removes physical component complexity entirely. Operators must weigh immediate port costs against long-term labor spent on fault isolation: once staff hours are counted, the cheapest port often yields the most expensive operational model.
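The closing argument, that port savings can be consumed by staff time, becomes concrete when each price gap is converted into engineering hours. The monthly figures below are the ones quoted in this section. The $100/hour loaded engineering rate is a hypothetical assumption, and the function name is illustrative.

```python
# Approximate monthly cost of a dedicated 10 Gbps connection, as quoted in this section.
MONTHLY_10G_USD = {
    "OCI FastConnect": 931.00,
    "AWS Interconnect multi-cloud": 9000.90,
    "Google Partner Cross-Cloud Interconnect": 13870.00,
    "Azure ExpressRoute": 16000.00,
}


def labor_offset_hours(price: float, baseline: float, hourly_rate: float) -> float:
    """Monthly engineering hours whose cost equals the premium over a baseline price."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return (price - baseline) / hourly_rate


if __name__ == "__main__":
    baseline = min(MONTHLY_10G_USD.values())
    for name, price in sorted(MONTHLY_10G_USD.items(), key=lambda kv: kv[1]):
        hours = labor_offset_hours(price, baseline, hourly_rate=100.0)  # hypothetical rate
        print(f"{name}: ${price:,.2f}/month, premium = {hours:.1f} engineer-hours/month")
```

At the assumed rate, the AWS premium over FastConnect equals roughly 81 engineer-hours per month. If managing manual cross-connects consumes more time than that, the cheaper port is the more expensive option.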

Provisioning High-Availability Connections via Console Automation

Last Mile Connectivity Architecture and Redundancy Model

According to the Last Mile Connectivity Details module, the system automatically provisions four redundant connections spanning two physical locations. This quad-redundancy model removes single points of failure within the access layer. The platform configures BGP routing and activates MACsec encryption by default, securing the edge without manual policy changes. Bandwidth scales from 1 Gbps to 100 Gbps and can be adjusted in the console without physical reprovisioning. Lumen Technologies is the initial network operator, delivering these high-speed links over a footprint of more than 340,000 miles. AT&T is also collaborating to support latency-sensitive workloads with fixed wireless and fiber assets.
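Spreading four connections over two locations means that losing an entire location still leaves half the links in service. The sketch below models that check. The equal per-link bandwidth and the location names are hypothetical; the source does not say how provisioned bandwidth is divided across the four connections.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    name: str
    location: str
    gbps: float  # assumption: capacity carried by this individual link


def surviving_capacity(links: list[Link], failed_location: str) -> float:
    """Capacity left after every link at one location goes down."""
    return sum(link.gbps for link in links if link.location != failed_location)


def worst_case_location_loss(links: list[Link]) -> float:
    """Lowest remaining capacity across all single-location failures."""
    locations = {link.location for link in links}
    return min(surviving_capacity(links, loc) for loc in locations)


if __name__ == "__main__":
    # Hypothetical quad-redundant layout: two links in each of two locations.
    quad = [
        Link("a1", "site-a", 10), Link("a2", "site-a", 10),
        Link("b1", "site-b", 10), Link("b2", "site-b", 10),
    ]
    single_site = [Link(f"a{i}", "site-a", 10) for i in range(1, 5)]
    print(worst_case_location_loss(quad))         # 20
    print(worst_case_location_loss(single_site))  # 0
```

A worst case of zero means a single location is a single point of failure, which is exactly what the four-links-over-two-locations model is meant to prevent.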

The true constraint lies not in bandwidth capacity but in the physical reach of the participating providers.

Application: Step-by-Step Multi-Cloud Provisioning via the Direct Connect Console

InfoQ reports that AWS Interconnect multi-cloud provisioning completes in minutes through the Direct Connect console, bypassing weeks of manual cross-connect coordination. Operators select the cloud provider, define source and destination regions, and enter a Google Cloud project ID to generate an activation key. Traffic flows entirely over private backbones and never traverses the public internet. At general availability, Google Cloud offers no web console for this integration, so the final activation on the Google Cloud side must be done with CLI commands. This asymmetry creates a procedural dependency: the AWS-side workflow stalls until someone runs the Google-side CLI steps.
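The hand-off between the two clouds can be modeled as a small state machine in which a request cannot become active until the Google-side CLI step is confirmed. Everything here is hypothetical: the class, the states, and the placeholder activation key do not correspond to an AWS or Google Cloud API. The project ID check follows Google Cloud's documented naming rules: 6 to 30 characters, made up of lowercase letters, digits, and hyphens, starting with a letter and not ending with a hyphen.

```python
import re
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional

# Google Cloud project ID rules: 6-30 chars, starts with a lowercase letter,
# contains only lowercase letters, digits, or hyphens, and does not end with a hyphen.
PROJECT_ID_RE = re.compile(r"[a-z][a-z0-9-]{4,28}[a-z0-9]")


class State(Enum):
    DRAFT = auto()       # request being filled in on the AWS side
    KEY_ISSUED = auto()  # activation key generated; waiting on the Google-side CLI step
    ACTIVE = auto()      # Google side confirmed; routes can propagate


@dataclass
class InterconnectRequest:
    """Hypothetical model of a multi-cloud provisioning request."""
    source_region: str
    destination_region: str
    bandwidth_gbps: int
    gcp_project_id: str
    state: State = State.DRAFT
    activation_key: Optional[str] = None

    def validate(self) -> None:
        if not PROJECT_ID_RE.fullmatch(self.gcp_project_id):
            raise ValueError(f"invalid Google Cloud project ID: {self.gcp_project_id!r}")
        if self.bandwidth_gbps <= 0:
            raise ValueError("bandwidth must be positive")

    def issue_key(self, key: str) -> None:
        """AWS-side step: record the activation key once the inputs are valid."""
        if self.state is not State.DRAFT:
            raise RuntimeError(f"cannot issue key from state {self.state.name}")
        self.validate()
        self.activation_key = key
        self.state = State.KEY_ISSUED

    def confirm_gcp_activation(self) -> None:
        """Google-side step (CLI at GA); refuses to run before a key exists."""
        if self.state is not State.KEY_ISSUED:
            raise RuntimeError("activation key must be issued before Google-side activation")
        self.state = State.ACTIVE


if __name__ == "__main__":
    req = InterconnectRequest("us-east-1", "us-east4", 10, "my-gcp-project")
    req.issue_key("example-activation-key")  # placeholder, not a real key format
    print(req.state.name)  # KEY_ISSUED
    req.confirm_gcp_activation()
    print(req.state.name)  # ACTIVE
```

Making the KEY_ISSUED state explicit captures the stall point described above: automation on the AWS side can finish, but nothing moves to ACTIVE until the Google-side step is recorded.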

Application: MTU Mismatch Risks and Silent Data Loss in Peered VPCs

As reported in Last Mile Connectivity Details, jumbo frames are activated by default, creating an immediate MTU mismatch risk against Google Cloud defaults. Operators configuring peered VPCs must align frame sizes manually, because AWS enables larger payloads while peer networks often keep standard Ethernet limits. This configuration gap causes silent packet loss: large TCP segments are dropped without generating ICMP error messages or visible interface counters. Traffic appears to flow during the initial handshake but fails under load, when applications send data blocks larger than the smaller peer MTU. Network engineering teams therefore face a mandatory pre-deployment audit of end-to-end path characteristics before enabling production workloads. InterLIR recommends validating maximum segment size (MSS) negotiation during the provisioning window rather than relying on automatic discovery protocols. Failing to configure matching values explicitly on both clouds results in degraded throughput that mimics congestion but stems from a frame-size incompatibility. Given the current lack of cross-cloud parameter synchronization, manual alignment via CLI remains the only reliable mitigation.

About

Vladislava Shadrina, Customer Account Manager at InterLIR, brings a distinctive perspective to the evolution of AWS Interconnect. Although her background includes architecture, her daily work at InterLIR focuses on optimizing network infrastructure through efficient IP resource management. The role requires deep familiarity with BGP routing, IP reputation, and the reliable connectivity that modern enterprises demand. As AWS launches Interconnect multi-cloud and last mile, Shadrina's experience helping clients secure clean, transparent network resources maps directly to the challenges these new services address. At InterLIR, a Berlin-based leader in IPv4 marketplace solutions since 2020, she sees firsthand how fragmented connectivity affects business operations. Her insights bridge raw IP allocation and the managed private connections AWS now offers, highlighting why streamlined multi-provider integration is essential for scalable global networks today.

Conclusion

The real fracture point for AWS Interconnect emerges not during provisioning but when operational complexity outpaces manual oversight. While the service promises high availability, the hidden tax lies in the continuous labor required to maintain cross-cloud parity, especially as bandwidth scales beyond the initial 10 Gbps increments. Organizations often underestimate that saving on monthly transit fees merely shifts the cost burden to the specialized engineering hours needed to prevent silent data loss from MTU mismatches. You cannot treat hybrid networking as a "set and forget" utility; it demands rigorous, ongoing parameter validation that most teams cannot sustain indefinitely without automation.

Adopt a hybrid-first strategy only if your workload requires sustained throughput exceeding 5 Gbps with latency sensitivity under 2ms, and commit to a six-month automation roadmap before scaling further. Do not proceed if your team lacks dedicated network engineers capable of scripting CLI-based verifications, as console-only management will inevitably lead to undetected packet fragmentation. Start by auditing end-to-end MTU settings across all peered VPCs this week using manual CLI commands rather than relying on dashboard status indicators. This immediate verification prevents the deceptive appearance of healthy traffic flows that collapse under production load. Until you can guarantee consistent frame alignment programmatically, treat every interconnect attachment as a temporary bridge requiring daily human intervention, not a permanent backbone.