Private CloudFront origins cut public IP exposure

You can eliminate public IP exposure entirely by using the CloudFront VPC origins feature announced in November 2024. This architectural shift transforms content delivery networks from simple caches into thorough security platforms, a trend Signisys notes is accelerating with 2025-2026 rollouts of mTLS authentication and AI traffic dashboards. By creating a managed connection inside your Virtual Private Cloud, organizations bypass the public internet completely, rendering complex workarounds like custom header rotations and rigid IP whitelisting obsolete.

Whether you operate a centralized model with a dedicated networking account or a distributed architecture where resource accounts manage their own CloudFront distributions, the ability to restrict access controls at the edge layer is critical. Readers will learn to navigate the trade-offs between centralized and distributed deployment models while executing zero-downtime migrations. We will cover specific configuration requirements, including VPC Block Public Access exclusions and the precise IAM permissions needed to establish these trusted pathways. Finally, we detail how to implement continuous deployment policies that maintain strict security postures even as origin infrastructure scales across multiple availability zones.

The Role of Private VPC Origins in Modern Cloud Security Architecture

CloudFront VPC Origin Architecture and ENI Mechanics

CloudFront VPC origins route traffic through managed Elastic Network Interface (ENI) resources inside the customer VPC, according to repost.aws. Direct backbone connectivity replaces public IP exposure in this design. The interface deployed inside the origin's private subnet consumes one IPv4 address, per towardsaws.com, and operators should reserve that address to prevent provisioning failures during deployment windows. Isolation is immediate: once the ENI becomes active, the origin accepts no direct internet ingress. Traffic flows exclusively over the AWS network, which removes the attack surface associated with public endpoints. The architecture depends strictly on subnet capacity planning, because VPC origin creation fails silently or times out when the target subnet has no available IPs. Unlike public origins, which require complex firewall rules, private models rely entirely on security group membership for access control.
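The capacity check described above can be sketched in a few lines. This is a minimal illustration, not an AWS tool: the function names and the `addresses_in_use` input are assumptions made for the example, and the only AWS-specific fact it relies on is that each subnet reserves five addresses (the first four and the last one).

```python
import ipaddress

# AWS reserves the first four addresses and the last address of every subnet.
AWS_RESERVED_PER_SUBNET = 5


def usable_ipv4(cidr: str) -> int:
    """Return how many IPv4 addresses in an AWS subnet can be assigned."""
    network = ipaddress.ip_network(cidr, strict=True)
    return max(network.num_addresses - AWS_RESERVED_PER_SUBNET, 0)


def can_host_vpc_origin(cidr: str, addresses_in_use: int, needed: int = 1) -> bool:
    """True if the subnet still has room for the address(es) the ENI needs.

    `addresses_in_use` is a hypothetical input; in practice it would come
    from your own inventory or from the subnet's reported free-address count.
    """
    return usable_ipv4(cidr) - addresses_in_use >= needed
```

Running a check like this in a pre-deployment pipeline step would surface an exhausted subnet before VPC origin creation is attempted, rather than after it times out.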

Operational rigidity defines the trade-off as architects lose dynamic public addressing flexibility in exchange for reduced DDoS risk. Automation pipelines stall when teams fail to account for the single IP requirement per origin. Internal segmentation policies gain importance while perimeter filtering devices become unnecessary. Network teams adjust monitoring practices to track private link health rather than external reachability metrics.

Distributions reach private Application Load Balancers (ALBs) in other accounts through AWS Resource Access Manager (AWS RAM). Backend resources lose their public internet exposure because the configuration routes traffic exclusively over the AWS backbone. Centralized architectures place distribution management inside a networking account while origin infrastructure resides in separate resource accounts. Security groups restrict ingress strictly to CloudFront-managed ranges, as the prerequisites require. Cross-account sharing via AWS RAM bridges the networking and resource boundaries. Operators define Network Load Balancers (NLBs) or ALBs inside private subnets as termination points. A tension exists between strict subnet isolation and available IPv4 capacity, since the ENI consumes an address that must remain reserved. InterLIR analysis indicates that failing to reserve this IP causes provisioning timeouts during high-velocity deployment windows.

Measurable costs arise from rigid security group policies because misconfigurations block legitimate health checks entirely. Most operators validate connectivity using header-based routing before shifting weight. Traffic shifts can begin at 0% and incrementally rise to 15% during testing phases. This approach ensures zero-downtime transitions while verifying backend readiness. Operational complexity increases as coordinating IAM permissions across accounts adds latency to initial setup cycles.
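The incremental shift from 0% to 15% can be expressed as a simple ramp schedule. The sketch below is illustrative only: `weight_ramp` and its even step spacing are assumptions for the example, and the ceiling is taken from the 15% figure mentioned above.

```python
# Ceiling taken from the 15% figure in the text (expressed as a fraction).
MAX_STAGING_WEIGHT = 0.15


def weight_ramp(steps: int, ceiling: float = MAX_STAGING_WEIGHT) -> list[float]:
    """Return evenly spaced staging weights from 0 up to `ceiling`.

    The result has `steps + 1` entries, starting at 0.0 so the first stage
    sends no weighted traffic while header-based checks run.
    """
    if steps < 1:
        raise ValueError("steps must be at least 1")
    if not 0 < ceiling <= MAX_STAGING_WEIGHT:
        raise ValueError("ceiling must be within (0, 0.15]")
    # Round to avoid floating-point noise such as 0.09999999999999999.
    return [round(ceiling * i / steps, 4) for i in range(steps + 1)]
```

For example, `weight_ramp(3)` yields four stages; each stage would be held until health checks and error rates look stable before moving to the next.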

Centralized models use AWS RAM for cross-account sharing, a capability introduced in November 2025 according to the prerequisites. This architecture places CloudFront distributions in a dedicated networking account while origin infrastructure such as Application Load Balancers (ALBs) resides in separate resource accounts. Distributed models require every resource account to manage its own distribution and origin infrastructure independently. The centralized mechanism relies on AWS Resource Access Manager to bridge these boundaries without exposing private IPs to the public internet. InterLIR analysis indicates that centralized control simplifies policy enforcement but creates a single point of configuration failure. Operational friction also increases because teams must coordinate security group updates across account lines rather than within a single boundary. Distributed models eliminate this coordination tax at the expense of a consistently applied security posture. Most operators of large-scale deployments favor centralization to maintain uniform DDoS mitigation policies. The hard requirement is that the resource share be published through AWS RAM: without it the underlying connectivity cannot be established, and Elastic Network Interface creation is blocked entirely. Team maturity dictates whether organizations choose management efficiency or architectural autonomy.

Internal Mechanics of Cross-Account VPC Connectivity and Traffic Routing

AWS RAM Cross-Account Sharing Mechanics for VPC Origins

VPC origin support remains restricted to AWS Commercial Regions, creating an immediate deployment constraint for non-standard environments. The mechanism utilizes AWS Resource Access Manager to establish a trusted boundary where a resource owner account shares the private Application Load Balancer or Network Load Balancer with the centralized networking account hosting CloudFront. This sharing model requires explicit acceptance of the resource share before traffic routing can commence across account lines. Operational friction often arises from synchronizing security policies across distinct administrative domains. Unlike single-account deployments, cross-account sharing demands that ingress rules on the origin security group explicitly reference the managed service identifiers from the consuming account. A failure to align these trust boundaries results in silent packet drops rather than explicit connection refusals. Operators must treat the resource share acceptance as a primary path dependency in any automation pipeline. Neglecting this handshake step renders the Elastic Network Interface unreachable despite successful provisioning in the source account. The architecture enforces strict isolation, meaning debugging requires coordinated access logs from both the producer and consumer accounts.
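Treating share acceptance as a pipeline gate might look like the sketch below. It assumes the input is a list shaped like the `resourceShareInvitations` entries returned by AWS RAM's GetResourceShareInvitations operation (using the `resourceShareArn` and `status` fields); the function name and the sample ARNs are hypothetical.

```python
def blocking_invitations(invitations: list[dict]) -> list[str]:
    """Return the ARNs of resource shares that are not yet ACCEPTED.

    An empty result means the pipeline may proceed to routing changes.
    """
    return [
        inv.get("resourceShareArn", "<unknown>")
        for inv in invitations
        if inv.get("status") != "ACCEPTED"
    ]


# Hypothetical sample data for illustration only.
sample = [
    {"resourceShareArn": "arn:aws:ram:example:share/alb-prod", "status": "ACCEPTED"},
    {"resourceShareArn": "arn:aws:ram:example:share/nlb-prod", "status": "PENDING"},
]
pending = blocking_invitations(sample)
```

A pipeline step could fail fast whenever `pending` is non-empty, which keeps the handshake from being skipped silently.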

Configuring Security Groups and Layer 7 Protection for Private Origins

Restricting security group ingress to CloudFront-managed ranges eliminates direct internet exposure according to Prerequisites data. This mechanism replaces public IP dependencies with strict layer-3 filtering, forcing all traffic through the AWS backbone. Operators must define explicit allow-lists for HTTP and HTTPS ports within the private subnet boundaries. Deploying AWS Shield Advanced alongside AWS WAF provides the necessary intelligent threat mitigation for these private endpoints. The cost is operational complexity; layering multiple protection services increases the configuration surface area for rule conflicts. Network teams must balance tight access controls against the latency introduced by deep packet inspection chains. A hidden tension exists between aggressive bot control and legitimate scraper availability during migration windows. Most operators overlook that Layer 7 rules applied at the edge do not automatically propagate to origin-level logging without specific CloudWatch metric configurations. Skipping this validation creates a self-inflicted denial of service where protective measures starve the application of valid telemetry. Successful deployment requires synchronizing security policies across both the distribution and the underlying Application Load Balancer.
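One way to verify that ingress is actually restricted is to scan the origin security group for world-open rules. The sketch below assumes rule dictionaries shaped like the `IpPermissions` entries returned by EC2's DescribeSecurityGroups (`IpRanges`/`CidrIp`, `Ipv6Ranges`/`CidrIpv6`); the function name and output format are choices made for this example.

```python
OPEN_CIDRS = {"0.0.0.0/0", "::/0"}


def public_ingress_rules(ip_permissions: list[dict]) -> list[dict]:
    """Return a summary of every ingress rule open to the whole internet."""
    findings = []
    for perm in ip_permissions:
        cidrs = [r["CidrIp"] for r in perm.get("IpRanges", [])]
        cidrs += [r["CidrIpv6"] for r in perm.get("Ipv6Ranges", [])]
        open_cidrs = sorted(c for c in cidrs if c in OPEN_CIDRS)
        if open_cidrs:
            findings.append(
                {
                    "protocol": perm.get("IpProtocol"),
                    "from_port": perm.get("FromPort"),
                    "to_port": perm.get("ToPort"),
                    "cidrs": open_cidrs,
                }
            )
    return findings
```

An empty result is the expected state for a private origin; any finding points at a rule that still permits direct internet ingress.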

Migration Prerequisites: Origin Shield, Quotas, and Pricing Validation

The "Other considerations" documentation shows that Origin Shield remains functional for private VPC origins if it was previously enabled for public endpoints. The mechanism preserves the caching hierarchy by allowing the shield to fetch from private IPs without reconfiguration. A tension exists between maintaining existing cache hit ratios and the requirement to recreate origin groups for the new private mappings. Operators often overlook that origin group logic does not migrate automatically; manual remapping is mandatory to prevent traffic from falling back to failed public nodes. This gap introduces a transient risk window in which failover paths remain undefined until the new groups are explicitly bound to the distribution.

Consolidated billing offers a distinct financial advantage when shifting architectures. According to the same documentation, data transfer between CloudFront and AWS origins is automatically waived, providing a consolidated monthly price rather than separate line items. This eliminates the inter-region or cross-AZ data transfer charges that typically accumulate in complex topologies. However, quota validation remains a silent failure point during rapid scaling events.

Executing Zero-Downtime Migrations with Continuous Deployment Policies

CloudFront Continuous Deployment as Blue-Green Migration Strategy

As a migration strategy, continuous deployment functions as a blue-green approach: it creates a staging distribution that mirrors production while the primary remains active. This mechanism isolates the new VPC origin configuration from live traffic, allowing operators to validate connectivity through the AWS backbone without impacting end users. The process begins with header-based routing to tag specific requests for the staging environment, ensuring only controlled tests reach the private infrastructure. Once validation confirms stability, the policy shifts to weight-based routing to gradually introduce production load.

- Configure the staging distribution with private origin settings while the primary serves public traffic.
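The two policy phases can be sketched as plain configuration payloads. This is a hedged illustration: the field names follow the CloudFront API's `ContinuousDeploymentPolicyConfig` structure as best understood here, and the `aws-cf-cd-` header prefix and 0.15 weight ceiling are assumptions to verify against the current API reference. The function names, DNS name, and header value are hypothetical.

```python
HEADER_PREFIX = "aws-cf-cd-"  # assumed required prefix for routing headers
MAX_WEIGHT = 0.15             # assumed ceiling for staging traffic weight


def _base(staging_dns: str, traffic_config: dict) -> dict:
    """Wrap a traffic config in the common policy envelope."""
    return {
        "StagingDistributionDnsNames": {"Quantity": 1, "Items": [staging_dns]},
        "Enabled": True,
        "TrafficConfig": traffic_config,
    }


def header_policy(staging_dns: str, header: str, value: str) -> dict:
    """Phase 1: only requests carrying the header reach staging."""
    if not header.startswith(HEADER_PREFIX):
        raise ValueError(f"header must start with {HEADER_PREFIX!r}")
    return _base(staging_dns, {
        "Type": "SingleHeader",
        "SingleHeaderConfig": {"Header": header, "Value": value},
    })


def weight_policy(staging_dns: str, weight: float,
                  idle_ttl: int = 300, max_ttl: int = 600) -> dict:
    """Phase 2: a fraction of production traffic reaches staging."""
    if not 0 <= weight <= MAX_WEIGHT:
        raise ValueError("weight must be between 0 and 0.15")
    return _base(staging_dns, {
        "Type": "SingleWeight",
        "SingleWeightConfig": {
            "Weight": weight,
            "SessionStickinessConfig": {"IdleTTL": idle_ttl, "MaximumTTL": max_ttl},
        },
    })
```

Keeping both payloads as pure functions makes it easy to review the exact change from header-based to weight-based routing before any API call is made.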

Testing begins with the header-based policy type, tagging traffic before shifting to weighted splits. Operators first configure the staging distribution with the new VPC origin settings while the primary continues serving public traffic. The continuous deployment policy is then updated to the weight-based type to route a specific percentage of production load to the private infrastructure. Session stickiness remains active during this phase, maintaining user connections until the viewer session closes completely. A tension exists between cache warming and traffic volume: the staging cache starts empty, so low initial weights prevent origin overload while headers validate pathing. Disabling the policy resets all active sessions, a side effect that demands coordination with application teams during maintenance windows. HTTP/3 support is absent for these policies, forcing fallback to TCP-based transports for all routed requests. The cost of misconfiguration is potential session loss rather than a financial penalty, as data transfer between CloudFront and AWS origins remains waived.

Post-Migration Cleanup and Cost Verification Steps

During cleanup, operators must delete unused ALBs or EC2 instances only after verifying that DNS records point exclusively to the new VPC origin. Promoting the staging configuration to production requires a final validation sweep to ensure no legacy public endpoints remain active in the CloudFront console. On the cost side, this means monitoring AWS Cost Explorer for 24–48 hours to confirm that charges for decommissioned public resources have ceased entirely. A tension exists between rapid resource deletion and the latency of billing data; premature termination of logging services can obscure final cost attribution.

- Confirm all traffic routes through the private interface using flow logs.

- Delete the original public-facing load balancers and associated Elastic IPs.

- Remove obsolete NAT Gateway configurations if no longer required by other workloads.

- Audit IAM roles to revoke permissions granted specifically for the migration temporary infrastructure.

Failure to remove these components results in continued accrual of hourly charges despite zero traffic volume. Most operators overlook that dangling DNS records can inadvertently redirect traffic back to deleted resources if recreation occurs. Thorough cleanup prevents budget leakage and maintains a strict security posture aligned with private architecture goals.
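The Cost Explorer check mentioned in this section could be automated along these lines. The sketch assumes daily results shaped like the `ResultsByTime` list from Cost Explorer's GetCostAndUsage response, grouped by service; the function name, the service label, and the sample figures are hypothetical.

```python
from decimal import Decimal


def days_with_charges(results_by_time: list[dict], service: str,
                      metric: str = "UnblendedCost") -> list[str]:
    """Return the start dates on which `service` still accrued a charge."""
    charged = []
    for day in results_by_time:
        for group in day.get("Groups", []):
            if service not in group.get("Keys", []):
                continue
            # Amounts arrive as strings, so parse them exactly with Decimal.
            amount = Decimal(group["Metrics"][metric]["Amount"])
            if amount > 0:
                charged.append(day["TimePeriod"]["Start"])
    return charged


# Hypothetical two-day sample: charges stop after the first day.
sample = [
    {"TimePeriod": {"Start": "2025-01-01", "End": "2025-01-02"},
     "Groups": [{"Keys": ["Example Load Balancing"],
                 "Metrics": {"UnblendedCost": {"Amount": "0.54", "Unit": "USD"}}}]},
    {"TimePeriod": {"Start": "2025-01-02", "End": "2025-01-03"},
     "Groups": [{"Keys": ["Example Load Balancing"],
                 "Metrics": {"UnblendedCost": {"Amount": "0", "Unit": "USD"}}}]},
]
still_billed = days_with_charges(sample, "Example Load Balancing")
```

If the most recent days in the 24–48 hour window still appear in the result, a decommissioned resource is probably still running.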

Operational Best Practices for Stabilizing Private Origin Deployments

Defining Operational Failure Modes for Private VPC Origins

Connection timeouts occur when the managed Elastic Network Interface cannot resolve the target private IP due to missing subnet capacity or security group blocks. Unlike public origin failures where routing is open, private VPC connectivity demands precise security group alignment between the CloudFront service and the customer's internal network. AWS documentation confirms that the ENI requires an available IPv4 address in the destination subnet; exhaustion here triggers immediate connection refusals rather than standard TCP retries. Operators frequently misdiagnose these 5xx errors as application crashes when the root cause is actually infrastructure saturation at the interface level. However, strict adherence to private networking introduces a rigid dependency on subnet availability that public endpoints do not share. A single depleted subnet prevents the ENI from attaching, halting traffic flow entirely regardless of application health. This constraint forces teams to audit IP utilization before migration rather than reacting to outages post-deployment. The operational reality dictates that network teams must treat ENI placement as a hard prerequisite equal to certificate validity. Ignoring this mechanical requirement ensures failure during the transition phase.

Applying Monitoring Strategies with CloudWatch and VPC Flow Logs

According to AWS documentation, operators must verify CloudFront quotas before migration to prevent immediate connection refusals during peak traffic shifts. Troubleshooting connection timeout events requires correlating CloudWatch metrics like `OriginLatency` with VPC Flow Logs to distinguish application lag from network denial. The managed Elastic Network Interface creates a specific failure domain where missing subnet IP capacity triggers errors distinct from standard public internet routing issues. Security group misconfigurations frequently block the ENI itself, yet operators often inspect only the load balancer rules while ignoring the new infrastructure layer. InterLIR analysis indicates that without explicit flow log validation, teams cannot confirm whether traffic reaches the private subnet or stalls at the virtual firewall boundary.

| Metric Source | Primary Signal | Operational Action |
|---|---|---|
| CloudWatch | Spiking `5xxErrorRate` | Verify security group ingress for managed ENI |
| VPC Flow Logs | REJECT records on port 443 | Check subnet IPv4 availability and BPA exclusions |
| Access Logs | `x-edge-result-type` timeouts | Validate SSL/TLS handshake with private origin |
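The flow log signal in the table can be extracted with a short parser. This sketch assumes records in the default (version 2) VPC Flow Logs format, which is space-separated with fourteen fields; the function name and the sample records are illustrative.

```python
# Field order of the default (version 2) VPC Flow Logs record format.
FIELDS = (
    "version account_id interface_id srcaddr dstaddr srcport dstport "
    "protocol packets bytes start end action log_status"
).split()


def rejected_on_port(lines: list[str], port: int = 443) -> list[dict]:
    """Return parsed REJECT records whose destination port matches `port`."""
    hits = []
    for line in lines:
        parts = line.split()
        if len(parts) != len(FIELDS):
            continue  # skip headers, blank lines, or custom-format records
        record = dict(zip(FIELDS, parts))
        if record["action"] == "REJECT" and record["dstport"] == str(port):
            hits.append(record)
    return hits


# Hypothetical sample records for illustration only.
sample = [
    "2 123456789012 eni-0abc 10.0.0.5 10.0.1.20 49152 443 6 3 180 1700000000 1700000060 REJECT OK",
    "2 123456789012 eni-0abc 10.0.0.5 10.0.1.20 49153 443 6 9 540 1700000000 1700000060 ACCEPT OK",
    "2 123456789012 eni-0abc 10.0.0.7 10.0.1.20 49154 22 6 1 60 1700000000 1700000060 REJECT OK",
]
rejects = rejected_on_port(sample)
```

Grouping the resulting records by `interface_id` would show whether the rejections cluster on the managed ENI or on the load balancer's own interfaces.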

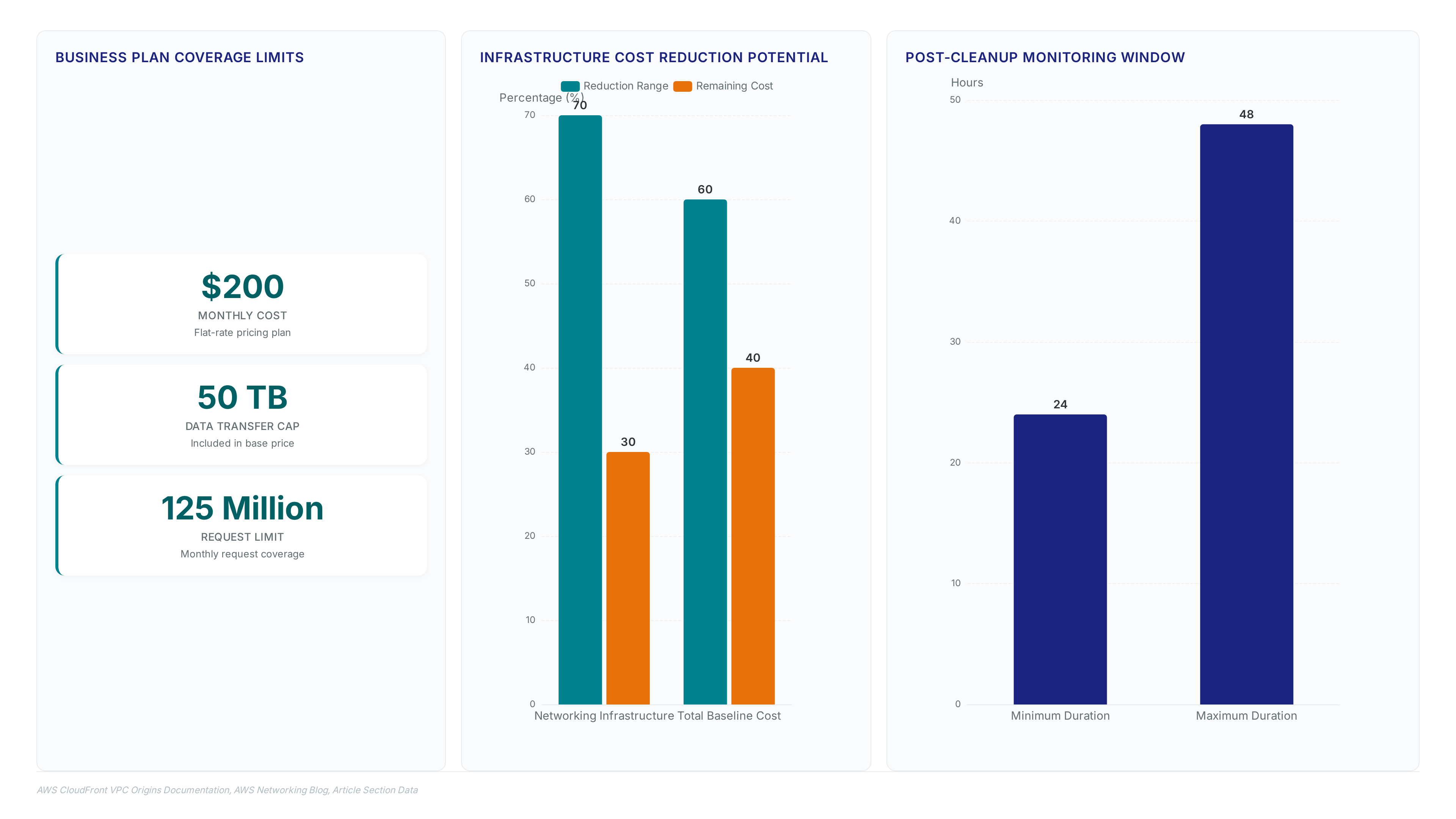

The cost of blind spots is measurable; high-volume sites on the Business flat-rate pricing plan pay $200 per month for coverage up to 50 TB, making undetected outages financially painful. A tension exists between aggressive security tightening and observability; locking down ingress too early blocks the diagnostic traffic needed to validate the path. Most deployment guides omit this feedback loop, leaving operators unable to differentiate between a healthy but silent origin and a broken connection. Proven monitoring validates that the private VPC architecture functions as intended rather than simply assuming connectivity based on configuration state.

Application: Post-Migration Cleanup Checklist to Prevent Cost Leakage

Decommissioning test ALBs and NLBs immediately after DNS cutover prevents billing for idle compute capacity that no longer serves production traffic. Operators must delete these resources only after confirming the CloudFront console reflects exclusive routing to the new private infrastructure. Legacy security groups permitting public ingress create unnecessary attack surfaces and should be removed once VPC origin connectivity is verified.

| Resource Type | Cleanup Action | Validation Method |
|---|---|---|
| Test Load Balancers | Delete via EC2 Console | Verify zero active connections |
| Staging Distributions | Disable or Delete | Check CloudFront metrics |
| Public Security Groups | Revoke Ingress Rules | Audit firewall state |

InterLIR advises teams to monitor AWS Cost Explorer for 24–48 hours post-cleanup to confirm that charges for decommissioned assets have ceased. High-volume sites often use the Business flat-rate pricing plan at $200 per month, which covers up to 125 million requests and 50 TB of transfer. A tension exists between rapid resource deletion and billing latency; removing assets before final validation risks irreversible data loss if rollback becomes necessary. The Origin Shield configuration remains valid for private origins, yet unused test distributions that consume quota must be purged to maintain deployment agility. Operators frequently overlook the cost of lingering EC2 instances used solely for connectivity testing during the transition phase. Final verification requires cross-referencing AWS Shield Standard logs to ensure no orphaned endpoints remain exposed to the public internet.

About

Vladislava Shadrina, Customer Account Manager at InterLIR, brings a unique perspective to the complexities of CloudFront network architecture. While her daily work focuses on optimizing IPv4 resource allocation and ensuring clean BGP routes for global clients, she deeply understands the critical importance of secure, efficient network design. At InterLIR, a Berlin-based leader in IP address marketplace solutions, Vladislava assists organizations in navigating infrastructure challenges that directly parallel the migration from public to private VPC origins. Her expertise in managing client accounts requires a granular understanding of how network availability impacts business continuity. By connecting the dots between reliable IP resources and reliable origin shielding, she highlights why securing application layers is vital. This article draws on her frontline experience helping IT teams scale securely, demonstrating how proper architectural choices in AWS environments complement the fundamental stability provided by trusted IP resource management.

Conclusion

Scaling VPC origins exposes a dangerous operational blind spot: the inability to distinguish between a silent, healthy origin and a severed connection without active, synthetic probing. While flat-rate pricing models mask minor inefficiencies, they create a false sense of security where undetected outages silently burn through included quotas, turning reliability failures into immediate capacity crises. The industry is rapidly pivoting from treating CDNs as mere caching layers to using them as thorough security perimeters, a shift that demands rigorous validation before the 2025-2026 rollout of native mTLS and AI-driven traffic analysis renders manual checks obsolete.

Organizations must mandate a strict 48-hour post-migration audit window before finalizing any architecture change. Do not rely on configuration state alone; verify actual data plane continuity. If your team cannot prove connectivity via independent monitoring paths, assume the deployment is compromised. This discipline prevents the accumulation of "zombie" infrastructure that inflates costs and expands attack surfaces.

Start by auditing your AWS Cost Explorer anomalies specifically for idle compute resources tagged as "test" or "staging" that were created in the last 30 days. Cross-reference these findings against your current security group ingress rules immediately to ensure no legacy public access points remain open. Eliminating these orphaned assets today secures your budget and reduces your exposure before automated threat landscapes evolve beyond manual remediation capabilities.