Protective DNS: Why Static Blocklists Fail Schools

With DNSFilter reporting a 30% surge in threats, static blocklists alone cannot secure modern Research and Education Networks. Protective Domain Name System services have evolved from simple filter lists into dynamic, intelligence-driven gateways essential for safeguarding K-12 and higher education infrastructure against escalating domain-based attacks.

We dissect how blocklist curation strategies often diverge, creating operational blind spots where malicious domains slip through due to conflicting categorization goals. Readers will gain a technical understanding of passive measurement techniques used to audit DNS query volumes against real-world threat data. We examine the practical deployment of tools like TreeTop to visualize these discrepancies and operationalize detection. By analyzing the friction between open-source intelligence and institutional policy, this piece provides a roadmap for implementing reliable DNS threat detection that respects academic freedom while neutralizing genuine risks.

The Role of Protective DNS in Research and Education Network Security

Protective DNS Definition: Recursive Blocking via Preconfigured Blocklists

Protective Domain Name System functions as a recursive resolver that denies queries matching threat-based blocklists. According to Introduction to PDNS in Research and Education Networks, this service restricts users by refusing requests for known malicious sites. Two distinct operational modes execute this function. The system may return an alternative IP address directing traffic to an internal notification page. Conversely, the mechanism might refuse resolution entirely, causing the client connection to time out without explanation.
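The two operational modes can be sketched as a small decision function. This is an illustrative model only, not any particular resolver's implementation: the names `Mode`, `Action`, `is_listed`, `decide`, and the notification address `NOTICE_IP` (taken from the documentation range 192.0.2.0/24) are all hypothetical, and matching a query against parent domains is an assumption about policy rather than something the source specifies.

```python
from enum import Enum
from typing import Optional, Set, Tuple


class Mode(Enum):
    """How the resolver is configured to treat a listed domain."""
    REDIRECT = "redirect"  # answer with the address of a notification page
    REFUSE = "refuse"      # give no usable answer at all


class Action(Enum):
    """What the resolver does for one specific query."""
    RESOLVE = "resolve"    # not listed: resolve normally
    REDIRECT = "redirect"
    REFUSE = "refuse"


# Hypothetical internal notification page (documentation address range).
NOTICE_IP = "192.0.2.10"


def is_listed(qname: str, blocklist: Set[str]) -> bool:
    """True if qname or any of its parent domains is on the blocklist."""
    labels = qname.lower().rstrip(".").split(".")
    return any(".".join(labels[i:]) in blocklist for i in range(len(labels)))


def decide(qname: str, blocklist: Set[str], mode: Mode) -> Tuple[Action, Optional[str]]:
    """Return the action and, for redirects, the substitute address."""
    if not is_listed(qname, blocklist):
        return (Action.RESOLVE, None)
    if mode is Mode.REDIRECT:
        return (Action.REDIRECT, NOTICE_IP)
    return (Action.REFUSE, None)
```

In this sketch the redirect mode gives the user an explanation page, while the refuse mode leaves the client without an answer, which corresponds to the unexplained timeout described above.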

Selection of filtering sources introduces significant variance in security posture. DNSFilter reported a 30% increase in threats, yet blocklist coverage remains inconsistent across vendors. A single list often misses emerging phishing domains that a secondary feed captures immediately. This fragmentation forces operators to merge multiple sources, creating complex overlap patterns where legitimate academic resources face accidental suppression. Merging lists increases the chance of conflicting entries.
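One way to keep a merged list auditable is to record which feed contributed each entry and to check the result against a local allowlist before deployment. The sketch below assumes feeds are already loaded as plain lists of domain names; the function names and the feed names in the usage note are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set


def normalize(domain: str) -> str:
    """Lower-case a name and drop surrounding whitespace and the trailing dot."""
    return domain.strip().lower().rstrip(".")


def merge_feeds(feeds: Dict[str, Iterable[str]]) -> Dict[str, Set[str]]:
    """Map every listed domain to the set of feed names that list it."""
    provenance: Dict[str, Set[str]] = defaultdict(set)
    for feed_name, domains in feeds.items():
        for domain in domains:
            provenance[normalize(domain)].add(feed_name)
    return dict(provenance)


def allowlist_conflicts(provenance: Dict[str, Set[str]],
                        allowlist: Iterable[str]) -> Dict[str, List[str]]:
    """Return allowlisted domains that the merged list would block, with their sources."""
    wanted = {normalize(d) for d in allowlist}
    return {d: sorted(provenance[d]) for d in sorted(wanted.intersection(provenance))}
```

For example, merging `{"feed_a": ["lab.university.example"], "feed_b": []}` and checking it against an allowlist containing `lab.university.example` reports that `feed_a` is responsible for the accidental suppression.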

Approximately 70% of IoT devices across all industries remain vulnerable to attack due to a lack of basic security features. Relying on a single curation strategy leaves these unprotected endpoints exposed to unlisted command-and-control servers. Static lists cannot match the velocity of modern domain generation algorithms used by attackers. Gaps in coverage allow bots to recruit vulnerable nodes before updates arrive.

According to Introduction to PDNS in Research and Education Networks, RENs support K-12 and higher education institutions with diverse user bases. This demographic breadth drives home-network and academic-network filtering strategies apart. Residential setups often apply static blocklists suited to a single family, whereas universities require dynamic curation to handle thousands of concurrent students and staff. A configuration optimized for a household leaves significant coverage gaps when applied to a campus, and academic environments also need a broader set of categories than homes do.

Passive monitoring becomes necessary because active probing disrupts production traffic flows. According to https://www.sciencedirect.com/science/article/abs/pii/S0140366409002886, passive DNS measurement collects real user queries without injecting test packets. Operators analyze these logs to detect overblocking of legitimate research domains or underblocking of emerging threats. The tension lies in balancing strict security mandates against the open collaboration required for scholarly work: excessive filtering stifles academic inquiry, while lenient policies expose vulnerable IoT devices to compromise. The IoT vulnerability figure cited earlier defines the operational reality, in which unmanaged endpoints cannot protect themselves against command-and-control traffic. The economic imperative for such controls is equally stark, with Key Data Points putting cybercrime costs at $15.6 trillion by 2029. These figures motivate the definition of Protective DNS as a recursive service that denies resolution for domains on curated lists. A threat-based blocklist is the specific dataset that maps known malicious identifiers to null routes or sinkholes.

Reliance on these lists introduces a tension between coverage breadth and false-positive rates. Operators must balance the need to catch novel phishing attempts against the risk of breaking legitimate research tools. The consequence of inaction is exposure to the full volume of automated botnet recruitment occurring across vulnerable academic subnets. Failure to address these vulnerabilities leaves institutional data exposed to exfiltration via unmonitored device channels.

Inside Blocklist Curation and Overlap Discrepancies Across Substantial Feeds

Blocklist Curation Opacity and Taxonomy Gaps

Data from Transparency, AI Trends, and Future Research indicates that openly available blocklists offer scant detail regarding the specific criteria used to classify a site as malicious. This lack of clarity generates taxonomy gaps where curators assign subjective definitions to identical threat vectors without providing public justification. Network operators struggle to differentiate between heuristic flags and confirmed malware signatures within these feeds. The mechanical outcome involves inconsistent blocking behavior across different security vendors depending on the same raw data sources.

| Feature | OTX | TIF | Prigent |
|---|---|---|---|
| Curation Source | Commercial + Community | Academic/Research | Academic/Research |
| Taxonomy Clarity | Low | Moderate | Moderate |
| Update Frequency | Dynamic | Periodic | Periodic |

A core limitation remains the inability to audit the decision logic behind specific domain inclusions. Blind trust in these lists compels network engineers to accept unknown false-positive rates as an inherent deployment cost. Relying on a single vendor introduces unquantified risk because classification standards vary wildly between organizations. Static lists fail to capture the nuance required for diverse academic environments without manual tuning.

Quantifying Feed Discrepancies: OTX, TIF, and Prigent Data

June 2025 data reveals only 665 entries exist simultaneously across OTX, TIF, and Prigent Malware feeds. This minimal triple-intersection proves that subjective curation strategies diverge sharply even when targeting identical threat categories. Operators assuming redundancy by stacking these sources face a false sense of security because the mathematical overlap remains negligible relative to individual list sizes. Each feed operates as an isolated silo rather than a complementary layer.

| Pairing | Intersection Count | Overlap Characteristic |
|---|---|---|
| OTX + TIF | 2,009 | Minimal shared context |
| TIF + Prigent | 55,451 | Moderate correlation |
| All Three | 665 | Near-zero consensus |

The table highlights how blocklist overlap differences create operational blind spots. TIF and Prigent Malware share 55,451 domains, suggesting some alignment in academic or research-focused taxonomy. Conversely, OTX intersects with TIF at merely 2,009 domains, indicating vastly different selection criteria for phishing versus malware. Relying on a single vendor leaves the majority of known malicious domains unblocked if that specific curator missed the entry. Aggregating feeds introduces its own latency penalty during updates. Larger combined datasets require more memory and processing cycles on recursive resolvers, potentially increasing query response times for legitimate traffic. Network engineers must balance the breadth of coverage against the performance degradation inherent in parsing massive, disjointed datasets. Administrators cannot quantify their remaining exposure surface without empirical verification of feed discrepancies.
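The intersection counts above are the kind of figure an operator can reproduce locally with plain set operations. The following is a minimal sketch assuming each feed has already been loaded into a Python set of normalized domain names; the function names are hypothetical and the data in the usage note is a toy example, not the study's data.

```python
from itertools import combinations
from typing import Dict, Set, Tuple


def overlap_report(feeds: Dict[str, Set[str]]) -> Dict[Tuple[str, ...], int]:
    """Count shared domains for every combination of two or more feeds."""
    report: Dict[Tuple[str, ...], int] = {}
    names = sorted(feeds)
    for size in range(2, len(names) + 1):
        for combo in combinations(names, size):
            shared = set.intersection(*(feeds[name] for name in combo))
            report[combo] = len(shared)
    return report


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Shared entries as a fraction of all entries in either feed."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0
```

Raw intersection counts are hard to interpret without the list sizes, so the Jaccard ratio is included as one possible way to normalize them. With toy feeds `otx = {"a", "b", "c"}` and `tif = {"b", "c", "d", "e"}`, the pair shares two entries out of five distinct ones, giving a ratio of 0.4.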

AI-Driven Classification Uncertainty in PDNS Feeds

As reported by Transparency, AI Trends, and Future Research, increasing reliance on AI-driven practices introduces greater uncertainty into PDNS blocklist curation. Automated classifiers replace static rules with probabilistic models that lack explainable decision boundaries for network operators. This shift transforms the operator's role from policy enforcer to validator of opaque algorithmic outputs. The mechanical consequence is a classification drift where legitimate academic resources face sudden, unexplained blocking events. Operators debating commercial versus open blocklists now confront a compounded variable: the stability of the underlying AI model.

Research surveys on DNS security analyzed over 170 peer-reviewed publications between 2010 and 2020, yet none predicted the scale of taxonomy ambiguity now introduced by generative models. Blind adoption of dynamic feeds risks destabilizing research collaboration more than the threats they aim to mitigate.

Operationalizing DNS Threat Detection with TreeTop and Passive Measurement

TreeTop Passive Measurement Architecture for DNS Analysis

Per Passive Measurement Study Methodology, TreeTop filters packet captures without injecting traffic, distinguishing it from active measurement probes. This tool, derived from dnstop, reconstructs domain-IP relationships into tree structures to parse raw pcap files offline. Operators deploy this architecture to analyze historical traffic patterns rather than testing live network responsiveness. The mechanism isolates recursive DNS responses, matching observed domains against static threat feeds while ignoring query noise. A critical limitation exists in the tool's dependency on pre-captured data; real-time blocking cannot occur during the analysis window. Unlike active scanning that forces immediate visibility into path latency, passive reconstruction requires waiting for natural user activity to populate the dataset. This delay prevents instant reaction to zero-day outbreaks unless coupled with a separate, low-latency ingestion pipeline.

| Capability | Passive (TreeTop) | Active Measurement |
|---|---|---|
| Traffic Injection | None | Synthetic Packets |
| Data Source | Real User Queries | Controlled Probes |
| Latency Impact | Zero | Measurable Overhead |

Network engineers must accept that passive monitoring trades immediacy for fidelity to actual user behavior. The absence of synthetic noise ensures every counted match represents a genuine resolution attempt by a client device.
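The offline matching step can be illustrated without any packet-parsing library. The sketch below is not TreeTop's code; it assumes the DNS responses have already been extracted from a capture into `(name, answer_ip)` pairs, nests the observed names by label from the TLD downward, and then reports exact matches per feed. All function names and the sentinel `IPS` are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Set, Tuple

# Sentinel key marking where answers are stored, so it cannot clash with a DNS label.
IPS = object()


def build_tree(records: Iterable[Tuple[str, str]]) -> dict:
    """Nest observed names by label, TLD first, storing answer IPs at each name."""
    tree: dict = {}
    for qname, ip in records:
        node = tree
        for label in reversed(qname.lower().rstrip(".").split(".")):
            node = node.setdefault(label, {})
        node.setdefault(IPS, set()).add(ip)
    return tree


def walk(tree: dict, suffix: Tuple[str, ...] = ()) -> Iterator[Tuple[str, Set[str]]]:
    """Yield (domain, answer_ips) for every name that carried an answer."""
    for key, child in tree.items():
        if key is IPS:
            yield ".".join(suffix), child
        else:
            yield from walk(child, (key,) + suffix)


def match_feeds(tree: dict, feeds: Dict[str, Set[str]]) -> Dict[str, List[str]]:
    """List the observed domains that appear verbatim in each feed."""
    hits: Dict[str, Set[str]] = defaultdict(set)
    for domain, _answers in walk(tree):
        for feed_name, listed in feeds.items():
            if domain in listed:
                hits[feed_name].add(domain)
    return {feed_name: sorted(hits[feed_name]) for feed_name in feeds}
```

Because the input is a finished capture, nothing here can block a query; the output is only a per-feed list of names that real clients resolved, which suits retrospective policy tuning.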

Per the study's key dates, the capture window spanned 19 to 25 June 2025, defining the temporal scope for processing 890 million DNS packets. Operators deploy TreeTop to parse these raw packet captures offline, matching observed domains against static threat feeds like OTX, TIF, and Prigent Malware without injecting test traffic. This passive mechanism reconstructs domain-IP relationships from pcap files, allowing retrospective analysis of user behavior rather than real-time interception. The tool isolates recursive responses, filtering noise to identify exact string matches within the curated lists. A critical limitation is the inability to block active threats during the collection window; the data serves only for post-incident forensics and policy tuning. According to DNS Query Matching Results, only 27 unique domains matched the commercial OTX feed, while 2,907 matched TIF and 236 matched Prigent Malware. These disparate counts show that stacking feeds does not add coverage evenly, because the sources intersect so little. The operational implication for Research and Education Networks is that relying on a single vendor creates massive blind spots, as each list targets different threat vectors with little overlap. Operators must validate matches against local context to prevent excessive false positives that disrupt academic research.

Evaluating Threat Feed Efficacy: OTX vs TIF vs Prigent Matches

As reported by DNS Query Matching Results, TIF matched 2,907 domains while Prigent Malware caught only 236. High-volume academic feeds detect significantly more noise than commercially curated sources. Per Passive Measurement Study Methodology, OTX remains the sole commercially curated list openly available for public use. Operators seeking broad coverage must accept TIF's aggressive posture despite its higher false-positive potential in research environments. The mechanical limitation is that stacking these sources does not guarantee thorough protection due to disjointed curation logic. Based on DNS Query Matching Results, the matched domains show zero overlap between OTX and Prigent Malware, so no matched domain appears in all three datasets. This absence of intersection forces network architects to choose between distinct threat philosophies rather than layering defenses. Relying on a single vendor creates blind spots that passive tools like TreeTop expose only after the fact.

| Feed Source | Match Volume | Curation Style |
|---|---|---|
| TIF | High | Community-driven |
| Prigent Malware | Low | Academic standard |
| OTX | Minimal | Commercial grade |

The operational implication for RENs is that multi-source filtering requires manual reconciliation of conflicting domain classifications. Blindly trusting high-match feeds risks blocking legitimate educational resources without observable benefit.
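Part of that reconciliation can be narrowed down automatically by surfacing only the domains on which feeds disagree. The sketch below assumes each feed can be reduced to a mapping from domain to a category label; the function name, feed names, and labels are hypothetical, and real feeds may not expose labels in this form.

```python
from typing import Dict


def label_conflicts(labelled_feeds: Dict[str, Dict[str, str]]) -> Dict[str, Dict[str, str]]:
    """Return domains that receive different labels from different feeds.

    labelled_feeds maps feed name -> {domain: label}. The result maps each
    disputed domain to the label every feed assigned to it.
    """
    seen: Dict[str, Dict[str, str]] = {}
    for feed_name, entries in labelled_feeds.items():
        for domain, label in entries.items():
            seen.setdefault(domain, {})[feed_name] = label
    return {
        domain: by_feed
        for domain, by_feed in sorted(seen.items())
        if len(set(by_feed.values())) > 1
    }
```

Domains listed by only one feed, or labelled identically everywhere, are left out, so the reviewer's queue contains only the entries where curation logic actually diverges.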

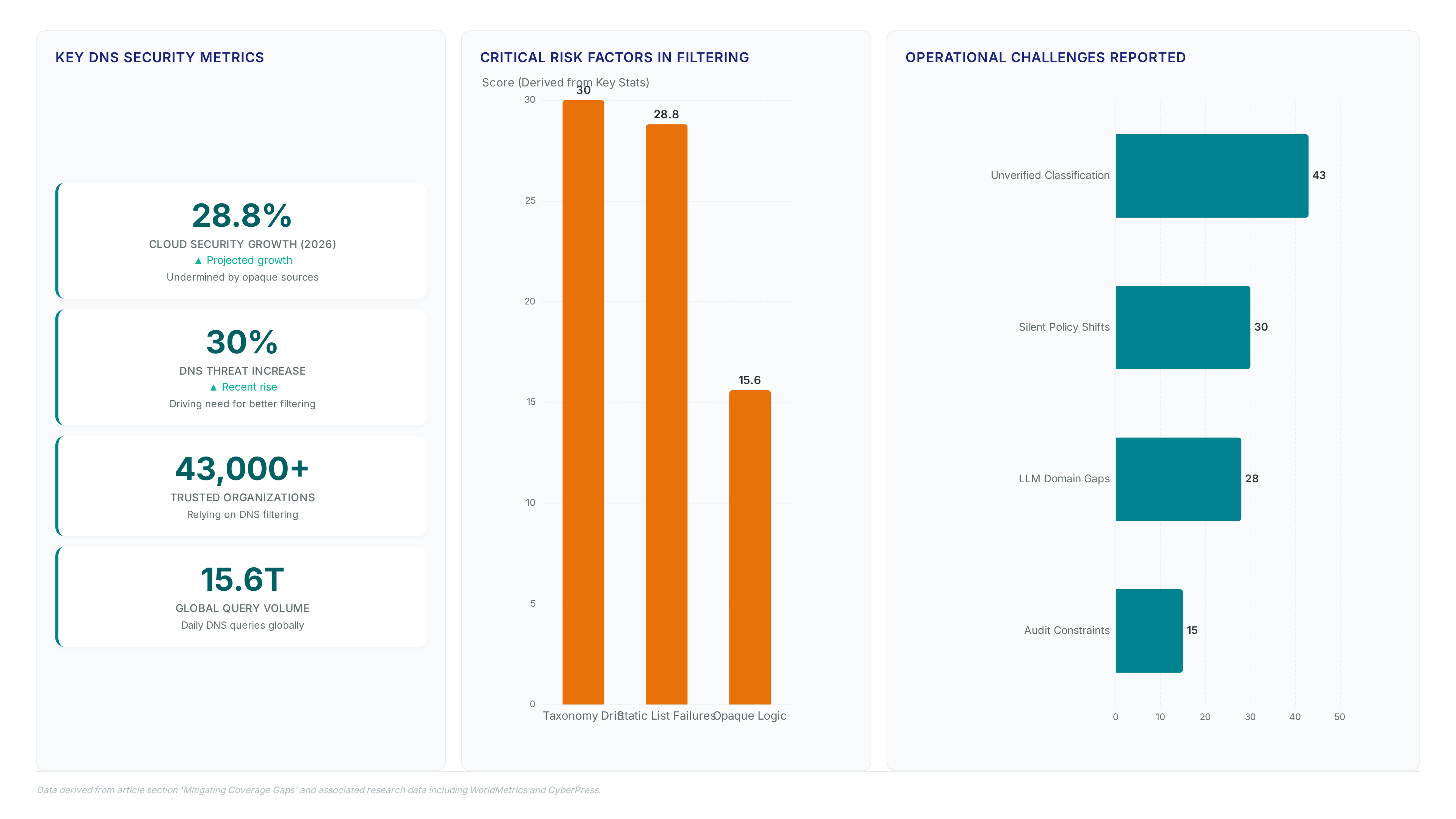

Mitigating Coverage Gaps and Overblocking Risks in DNS Filtering Strategies

Risks: Defining Blocklist Curation Opacity and Taxonomy Gaps

Blind spots emerge for operators managing Research and Education Networks when openly available blocklists offer minimal insight into classification criteria. Administrators struggle to separate genuine malware from false positives until legitimate academic resources disappear from user access. Mechanical failure occurs through taxonomy drift, a phenomenon where identical domains receive conflicting threat labels across different feeds without explanation.

- Unverified classification logic blocks valid research tools unexpectedly.

- Operators encounter unpredictable policy enforcement when commercial curation shifts silently.

- Static lists fail to adapt to emerging LLM service domains utilized by attackers.

- Resource constraints prevent small teams from auditing every flagged entry manually.

Cloud security is projected to grow by 28.8% in 2026, yet reliance on opaque sources undermines this expansion by introducing unquantifiable risk into the filtering chain. Tension exists between the need for broad coverage and the inability to audit the decision boundaries of external curators. Network teams cannot tune policies effectively without visibility into how a domain earns its blocked status. Passive measurement exposes gaps that static analysis misses entirely, and the disparity in match counts between feeds reveals the mechanical failure of single-source blocklist curation in high-volume academic environments. Operators relying solely on commercial feeds like OTX miss significant malware traffic that broader community lists capture. Aggregating feeds, in turn, introduces latency penalties and false-positive risks for legitimate research tools, and disjointed taxonomy logic causes valid academic resources to vanish from user access without warning.

- Unverified classification logic blocks necessary research instrumentation.

- Silent policy shifts alter user trust in network stability.

- Static lists fail against emerging LLM-driven threats.

- Manual review processes cannot scale to match automated threat generation rates.

Italy's "Piracy Shield" serves as a stark case study where rapid DNS blocking caused collateral damage to legitimate services. Aggressive filtering without transparency creates operational chaos rather than security. Future defense requires moving beyond static lists toward dynamic frameworks like AI-Sinkhole, which classifies emerging services semantically. Without this dual approach, networks remain blind to the specific domains bypassing their defenses.

Static Blocklist Rigidity Versus Dynamic LLM Service Discovery

Manual updates cannot match the velocity of automated domain generation, causing static blocklist curation to fail at intercepting emerging LLM services. Research presented at the IMC PRIME 2025 Workshop confirms that frameworks like AI-Sinkhole apply quantized models, including Llama 3 and DeepSeek-R1, to dynamically discover these threats. The mechanism relies on semantic classification rather than static string matching, allowing real-time adaptation to new chatbot infrastructures. Deploying such dynamic systems introduces computational overhead that many resource-constrained RENs cannot sustain without external cloud support. This constraint forces a choice between exhaustive coverage and operational feasibility.

| Feature | Static Blocklists | AI-Sinkhole Framework |
|---|---|---|
| Update Latency | Hours to days | Near real-time |
| Discovery Method | Manual curation | Semantic analysis |
| Model Dependency | None | Llama 3, DeepSeek-R1 |

Operators attempting to fix overblocking in PDNS face a hidden cost: the loss of visibility into novel threat vectors that lack historical signatures.

- Static lists ignore unclassified generative AI endpoints entirely.

- Dynamic discovery requires significant compute resources.

- False positives may increase during initial model training phases.

- Legacy infrastructure struggles to process semantic data streams efficiently.

Legitimate academic tools utilizing new AI APIs often remain accessible while known malware persists undetected until the next cycle because rigid taxonomies dominate current strategies. InterLIR advises that networks must integrate passive measurement tools alongside active filtering to bridge this temporal gap effectively.

About

Vladislava Shadrina, Customer Account Manager at InterLIR, brings a unique client-focused perspective to the critical discussion on Protective Domain Name System (PDNS) implementation. While her daily work at InterLIR, a leading IPv4 address marketplace, centers on optimizing network resource allocation and ensuring clean IP reputation, she directly observes how fundamental DNS security underpins reliable connectivity for educational institutions. Her role involves guiding clients through complex infrastructure challenges, where understanding threats like malicious domains is essential for maintaining uninterrupted access to digital learning tools. Although InterLIR specializes in IP redistribution, Shadrina's expertise in network availability and security protocols allows her to effectively bridge the gap between raw IP resources and advanced protective measures like PDNS. By connecting practical client needs with technical security strategies, she highlights why reliable DNS filtering is vital for Research and Education Networks aiming to protect students and faculty from evolving cyber threats while preserving open access to knowledge.

Conclusion

The reliance on static blocklists creates a critical visibility gap where novel generative AI endpoints operate undetected, rendering traditional perimeter defenses obsolete at scale. As threat actors exploit this latency, the operational cost shifts from simple maintenance to managing unmitigated exposure during the hours or days it takes for manual updates to propagate. Waiting for signature matches is no longer a viable strategy when domain generation occurs in milliseconds. Organizations must transition to AI-augmented blocking architectures that utilize semantic analysis to identify threats based on behavior rather than known bad strings.

Adopt dynamic, model-driven discovery systems immediately if your network supports over 500 IoT devices or handles sensitive research data; legacy approaches will fail to protect these assets within the next 18 months. Do not wait for a breach to justify the computational overhead required for real-time adaptation. The window to secure infrastructure against automated, evolving domains is closing rapidly, and proactive integration is the only path forward.

Start by auditing your current DNS resolution logs this week to identify uncategorized traffic spikes pointing to new AI service providers, then pilot a semantic classification tool against this specific data stream before the next quarterly security review.
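A first pass at that audit can be done with a short script over exported resolver logs. The sketch below assumes the logs have been reduced to `(day, queried_name)` pairs with ISO-formatted dates and that a set of already categorized domains is available; the function name, the `factor` and `min_queries` thresholds, and the sample domains are hypothetical choices that would need tuning for a real network.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def uncategorized_spikes(log: Iterable[Tuple[str, str]],
                         categorized: Set[str],
                         factor: float = 3.0,
                         min_queries: int = 10) -> List[Tuple[str, int, float]]:
    """Flag uncategorized domains whose latest-day volume jumps above their baseline.

    Returns (domain, latest_day_count, mean_count_on_earlier_days) tuples,
    busiest first. ISO dates are assumed so that string order is date order.
    """
    per_day: Dict[str, Counter] = defaultdict(Counter)
    for day, qname in log:
        per_day[day][qname.lower().rstrip(".")] += 1

    days = sorted(per_day)
    if len(days) < 2:
        return []  # no earlier day to compare against
    latest, history = days[-1], days[:-1]

    flagged = []
    for domain, count in per_day[latest].items():
        if domain in categorized or count < min_queries:
            continue
        baseline = sum(per_day[d][domain] for d in history) / len(history)
        # Floor the baseline at 1 so brand-new domains are compared fairly.
        if count >= factor * max(baseline, 1.0):
            flagged.append((domain, count, baseline))
    return sorted(flagged, key=lambda item: -item[1])
```

The flagged list is only a starting point for manual review or for feeding a classification pilot; a spike by itself says nothing about whether a domain is malicious.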