Reproducible builds beat signature-based failures now

Over 1.2 million malicious packages now plague major repositories, making reproducible builds the only viable defense against supply chain collapse. The March 2026 report from the Reproducible Builds project asserts that deterministic verification is no longer optional but a critical necessity for software integrity. With malware on open-source platforms surging by 73% according to ReversingLabs, the industry must shift from trusting signatures to verifying build outputs mathematically.

Readers will examine how hash-based integrity checking, recently proposed by Eric Biggers and Thomas Weißschuh, resolves the conflict between module signing and third-party rebuildability without relying on complex PKCS#7 infrastructures. The discussion details the mechanics of deterministic verification, specifically how tools like diffoscope diagnose bit-for-bit discrepancies that traditional audits miss. Furthermore, the article analyzes distribution-level implementations, including Lucas Nussbaum's new Debaudit service for validating Debian source packages against upstream repositories.

Sonatype's 2026 data reveals that 454,600 new malicious packages appeared in 2025 alone, underscoring why static keys fail in modern software supply chains. By deploying automated reconstruction via systems like rebuilderd, organizations can detect injected backdoors that signature-based approaches inherently trust. This transition from blind faith to cryptographic proof defines the future of secure software deployment.

The Role of Reproducible Builds in Modern Software Supply Chain Security

Defining Reproducible Builds and Hash-Based Integrity

Reproducible builds generate bit-for-bit identical binaries from identical source inputs, eliminating build-environment variance. This determinism allows operators to verify that a distributed binary matches its source without trusting the builder's identity alone. Current signature-based methods rely on static keys or complex PKI chains, creating friction when third parties attempt rebuilds. Eric Biggers noted on the Linux Kernel Mailing List that signature authentication requires parsing PKCS#7, X.509, and ASN.1 structures, adding unnecessary complexity compared to direct hash verification. The proposed mechanism embeds a list of SHA-256 hashes for all modules directly into the `vmlinux` binary, in a section named `.module_hashes`.

On the Linux Kernel Mailing List, Thomas Weißschuh identified signature-based checks as breaking reproducible builds because of key generation conflicts. The signature-based model forces a choice between build-time key randomness, which destroys reproducibility, and static keys, which prevent third-party verification. Either option blocks deterministic validation of the final binary artifact. A new patch set shared in January 2025 implements an alternate method that uses build-time hashes to resolve this deadlock. Eric Biggers noted on the same list that this approach removes the dependency on PKCS#7 and X.509 parsing logic. The mechanism embeds a hash list directly into the `vmlinux` binary, shifting trust from external keys to the build environment itself. However, this hash-based integrity model assumes the build host remains uncompromised during the compilation window. Unlike signature chains, it offers no post-hoc revocation path if the builder is later found to be malicious. Operators gain bit-for-bit verification but lose the ability to delegate trust via certificate authorities. The trade-off is a narrower attack surface at the cost of requiring higher confidence in the immediate build infrastructure. Production teams must decide whether their threat model prioritizes preventing supply-chain injection over maintaining complex PKI hierarchies.
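The conflict between per-build keys and reproducibility can be illustrated with a small sketch. It is not the kernel's mechanism: an HMAC with a freshly generated key stands in for a per-build signing key (real module signing uses public-key signatures wrapped in PKCS#7), and the function names `build_with_ephemeral_key` and `build_with_hash` are hypothetical.

```python
import hashlib
import hmac
import os
from typing import Tuple

# Stand-in for the bytes of a compiled module; identical in both "builds".
MODULE_BYTES = b"\x7fELF placeholder module contents"


def build_with_ephemeral_key(module: bytes) -> bytes:
    """Append an authentication tag made with a key generated at build time.

    HMAC is only a stand-in for a real signature. The point is that the
    key material, and therefore the appended tag, differs on every build.
    """
    key = os.urandom(32)  # fresh key per build, discarded afterwards
    tag = hmac.new(key, module, hashlib.sha256).digest()
    return module + tag


def build_with_hash(module: bytes) -> Tuple[bytes, str]:
    """Leave the module untouched and record its SHA-256 digest separately."""
    return module, hashlib.sha256(module).hexdigest()


# Two independent "builds" of the same source.
signed_a = build_with_ephemeral_key(MODULE_BYTES)
signed_b = build_with_ephemeral_key(MODULE_BYTES)
hashed_a = build_with_hash(MODULE_BYTES)
hashed_b = build_with_hash(MODULE_BYTES)

print("ephemeral-key artifacts identical:", signed_a == signed_b)
print("hash-based artifacts identical:   ", hashed_a == hashed_b)
```

Under these assumptions the two keyed artifacts differ even though the module bytes are the same, while the hash-based outputs match, which is the property a third-party rebuilder needs.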

Hash-based integrity replaces complex PKI chains with deterministic build artifacts to validate software provenance. Traditional signature-based models depend on private keys and X.509 certificates, creating friction when third parties attempt rebuilds. Industry trend reporting describes the sector as moving from a "visibility era" of static SBOMs to a "governance era" defined by continuous validation. This shift prioritizes automated hash verification over manual signature management to reduce operational overhead.

| Feature | Signature-Based | Hash-Based |
|---|---|---|
| Trust Boundary | Private Key Security | Build Environment Integrity |
| Validation Logic | PKCS#7, X.509, ASN.1 parsing | SHA-256 digest comparison |
| Rebuild Impact | Breaks reproducibility | Preserves determinism |
| Complexity | High (Crypto APIs) | Low (Digest only) |

Operators must weigh the administrative burden of key rotation against the rigidity of embedded hashes. Hash-based systems transfer total trust to the initial build environment, meaning a compromised compiler invalidates the entire chain. Automated hash comparison reduces this exposure by surfacing tampering sooner. A further limitation is that hash lists cannot attest to the identity of the builder, only to the state of the content. In exchange, the approach removes the attack surface inherent in maintaining long-lived signing keys.

Inside the Mechanics of Deterministic Verification and Diff Analysis

Diffoscope's Content-Aware Binary Analysis Mechanics

According to Reproducible Builds project documentation, diffoscope is an in-depth, content-aware utility that recursively unpacks archives to analyze binary differences beyond simple text diffs. The tool identifies file types within container formats such as tarballs or ISO images, then invokes a specific handler for each layer rather than treating the input as an opaque blob. This recursive decomposition lets engineers inspect internal structural changes, such as timestamp variations or ordering shifts in directory entries, which often cause reproducibility failures.

- The utility detects the outer archive format and extracts contents to a temporary workspace.

- It compares metadata and file lists before diving into byte-level comparisons of matching files.

- Specialized comparators handle distinct formats like ELF binaries, PNG images, and Debian packages.
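The recursive strategy in the list above can be sketched in a few lines. This is an illustration of the idea only, not diffoscope's implementation: it handles just tar containers, and `compare`, `make_tar`, and `_try_unpack` are hypothetical helper names.

```python
import io
import tarfile
from typing import Dict, List, Optional


def _try_unpack(data: bytes) -> Optional[Dict[str, bytes]]:
    """Return {member name: contents} if data is a tar archive, else None."""
    try:
        with tarfile.open(fileobj=io.BytesIO(data)) as tf:
            return {m.name: tf.extractfile(m).read()
                    for m in tf.getmembers() if m.isfile()}
    except (tarfile.TarError, EOFError, OSError):
        return None  # not a container this sketch understands


def compare(a: bytes, b: bytes, path: str = "") -> List[str]:
    """Describe differences, descending into nested tar archives."""
    if a == b:
        return []
    left, right = _try_unpack(a), _try_unpack(b)
    if left is not None and right is not None:
        report: List[str] = []
        for name in sorted(set(left) | set(right)):  # compare file lists first
            where = f"{path}/{name}" if path else name
            if name not in left:
                report.append(f"{where}: only in second input")
            elif name not in right:
                report.append(f"{where}: only in first input")
            else:
                report.extend(compare(left[name], right[name], where))
        if not report:  # members match, so headers (e.g. mtime) must differ
            report.append(f"{path or '<root>'}: same members, container metadata differs")
        return report
    # Leaf file: report the first differing byte offset.
    offset = next((i for i, (x, y) in enumerate(zip(a, b)) if x != y),
                  min(len(a), len(b)))
    return [f"{path or '<root>'}: contents differ at byte offset {offset}"]


def make_tar(files: Dict[str, bytes], mtime: int = 0) -> bytes:
    """Build an in-memory tar archive for the demonstration."""
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w") as tf:
        for name in sorted(files):
            info = tarfile.TarInfo(name)
            info.size = len(files[name])
            info.mtime = mtime
            tf.addfile(info, io.BytesIO(files[name]))
    return buf.getvalue()


outer_a = make_tar({"inner.tar": make_tar({"a.txt": b"hello"}), "same.txt": b"unchanged"})
outer_b = make_tar({"inner.tar": make_tar({"a.txt": b"hellp"}), "same.txt": b"unchanged"})
print(compare(outer_a, outer_b))
```

A flat byte comparison of the two outer archives would only say that they differ; descending through the nested archive attributes the change to one member file.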

Per Debian package logs, Chris Lamb uploaded versions 314 and 315 to address testing workflows, ensuring the tool remains stable against evolving distribution policies. Jelle van der Waa fixed compatibility with LLVM version 22 and adjusted the PGP file detection regular expression. A significant limitation exists: deep recursion increases analysis time as archive depth grows, potentially delaying CI/CD pipelines during large-scale audits. Operators must balance granular visibility against processing latency when integrating this tool into automated verification gates.

According to the diffoscope changelog, Jelle van der Waa fixed compatibility with LLVM version 22 to restore deterministic diffing for Rust artifacts. This update addresses a specific breakage where newer compiler output formats caused false-positive differences during verification cycles. Without this patch, operators attempting to fix unreproducible Rust builds face opaque binary divergences that halt certification pipelines. The tool now correctly parses updated section headers emitted by the upgraded compiler suite. However, according to the diffoscope release notes, the same update cycle also required adjusting the PGP file detection regular expression, indicating tight coupling between toolchain updates and parsing logic. This dependency creates a synchronization burden in which verification tools must evolve in lockstep with compilers. In the GNU Guix package repository, Vagrant Cascadian updated diffoscope to version 315 to incorporate these fixes for distribution-wide validation.

| Component | Update Trigger | Operational Impact |
|---|---|---|
| LLVM Parser | Version 22 output changes | Prevents false-positive binary diffs |
| PGP Regex | Format variation | Restores signature block detection |
| Rust Handler | Metadata ordering | Enables crate-level verification |

Operators must align verification tool versions with their compiler toolchain releases to maintain audit continuity. Lagging behind on diffoscope updates while upgrading LLVM introduces unresolvable noise into reproducibility reports.

Per Linux Kernel Mailing List archives, Thomas Weißschuh's December 2024 RFC patches identified build-time key generation as a primary reproducibility blocker. Current signature-based workflows force operators to choose between generating unique keys per build, which destroys determinism, or reusing static keys, which compromises security posture. This dichotomy prevents third parties from verifying that distributed binaries match published source code exactly. The proposed hash-based alternative eliminates this conflict by embedding SHA-256 digests directly into the kernel image rather than relying on external cryptographic signatures.

- Generate module code from source inputs.

- Compute deterministic hashes for each object file.

- Embed the resulting list into the `.module_hashes` section.

This sequence ensures that identical source inputs always yield identical binary outputs without requiring secret key management during the compilation phase. However, adopting this method requires disabling legacy `MODULE_SIG` configurations, creating a temporary interoperability gap with older bootloader ecosystems that expect traditional signature blocks. Operators managing mixed-environment fleets must coordinate kernel upgrades across infrastructure to avoid boot failures on nodes lacking hash-verification support. The shift fundamentally alters the trust boundary from private key secrecy to build environment integrity.
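The three steps above can be approximated as follows. This is a minimal sketch under stated assumptions: the article does not describe the byte layout of the embedded list, so the sorted concatenation of raw digests used here is an assumption, and `build_hash_section` and `module_is_trusted` are hypothetical names rather than kernel functions.

```python
import hashlib
from typing import Dict


def build_hash_section(modules: Dict[str, bytes]) -> bytes:
    """Concatenate the SHA-256 digest of every module in a deterministic order.

    Sorting the digests (an assumption of this sketch) keeps the blob
    independent of the order in which the build enumerated the files.
    """
    digests = sorted(hashlib.sha256(blob).digest() for blob in modules.values())
    return b"".join(digests)


def module_is_trusted(module: bytes, section: bytes) -> bool:
    """Check whether a module's digest appears in the embedded list."""
    digest = hashlib.sha256(module).digest()
    size = hashlib.sha256().digest_size  # 32 bytes per entry
    return any(section[i:i + size] == digest
               for i in range(0, len(section), size))


# Placeholder module contents; the file names are illustrative only.
modules = {"alpha.ko": b"alpha module bytes", "beta.ko": b"beta module bytes"}
section = build_hash_section(modules)

# A rebuild that enumerates files in a different order yields the same blob.
reordered = {"beta.ko": modules["beta.ko"], "alpha.ko": modules["alpha.ko"]}
print(build_hash_section(reordered) == section)

print(module_is_trusted(modules["alpha.ko"], section))
print(module_is_trusted(b"alpha module bytes + backdoor", section))
```

No secret enters the process at any point, so anyone with the same sources can regenerate the blob and compare it byte for byte.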

Debaudit's Role in Verifying Debian Source Package Fidelity

In distribution work, Lucas Nussbaum announced Debaudit, a new service to verify the reproducibility of Debian source packages. The mechanism targets the Vcs-Git repository layer, ensuring the source package remains a faithful representation of upstream code before binary compilation occurs. Unlike reproduce.debian.net, which validates that binaries match their sources, this tool confirms the source archive itself has not been tampered with during packaging. However, relying on this verification requires strict alignment between packaging scripts and upstream tags, a frequent point of failure in complex maintainer workflows. If the packaging metadata diverges from the remote Git history, the fidelity check fails even if the code is safe. This creates a tension between automated fidelity enforcement and the flexibility maintainers need for patch management.

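| Tool | Validation Target | Failure Mode |
|---|---|---|
| Debaudit | Source package vs. upstream Vcs-Git repository | Packaging metadata diverges from the upstream Git history |
| Rebuilderd | Binary vs. declared source | Rebuilt output hash differs (non-determinism or tampering) |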

Installing Rebuilderd 0.26.0 for Repository Monitoring

In tool development, rebuilderd version 0.26.0 was released with a complete database redesign to enable smoother onboarding for repository monitoring. The server monitors the official package repositories of Linux distributions by attempting to reproduce observed build results from the declared sources. It fetches source packages, rebuilds them in isolated environments, and compares output hashes to detect non-determinism or tampering. Operators gain the ability to validate supply chain integrity continuously, without manual intervention. However, this continuous validation requires significant compute resources that many smaller teams cannot provision immediately.
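The fetch, rebuild, and compare loop can be sketched as below. This is not rebuilderd's code or API: `PublishedPackage`, `verify_repository`, the result labels, and the toy `rebuild` callable are all hypothetical, and a real rebuilder would run the distribution's build tooling inside an isolated environment.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List


@dataclass(frozen=True)
class PublishedPackage:
    name: str
    version: str
    source: bytes        # declared source the binary claims to come from
    binary_sha256: str   # digest of the binary the repository distributes


def verify_repository(
    packages: Iterable[PublishedPackage],
    rebuild: Callable[[bytes], bytes],
) -> Dict[str, List[str]]:
    """Rebuild each package and sort it into reproduced / mismatch / error."""
    results: Dict[str, List[str]] = {"reproduced": [], "mismatch": [], "error": []}
    for pkg in packages:
        label = f"{pkg.name}-{pkg.version}"
        try:
            rebuilt = rebuild(pkg.source)
        except Exception:
            results["error"].append(label)  # build failed; nothing to compare
            continue
        digest = hashlib.sha256(rebuilt).hexdigest()
        bucket = "reproduced" if digest == pkg.binary_sha256 else "mismatch"
        results[bucket].append(label)
    return results


def toy_rebuild(source: bytes) -> bytes:
    """Deterministic stand-in for a real, sandboxed package build."""
    if not source:
        raise ValueError("empty source package")
    return b"BIN:" + source.upper()


def _sha(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


repo = [
    PublishedPackage("honest", "1.0", b"print('hi')", _sha(toy_rebuild(b"print('hi')"))),
    PublishedPackage("tampered", "1.0", b"print('hi')", _sha(toy_rebuild(b"print('hi')") + b"backdoor")),
    PublishedPackage("broken", "1.0", b"", _sha(b"")),
]
print(verify_repository(repo, toy_rebuild))
```

The "mismatch" bucket is the interesting one: it cannot distinguish a non-deterministic build from a tampered binary on its own, which is where a content-aware diff becomes necessary.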

In distribution work, Debian plans to block non-reproducible packages in Debian 14 "Forky", creating immediate conflict with signature-based module verification. Current workflows often generate unique signing keys at build time, a practice that destroys determinism and triggers these new enforcement filters. Operators must transition to hash-based integrity or static keying strategies to avoid rejection from the archive. Static keys allow reproducibility but reduce security posture, whereas dynamic keys secure the supply chain but fail the reproducibility checks.

Diffoscope serves as the primary diagnostic utility for resolving these failures before submission. Engineers apply the tool to perform content-aware diffs between expected and actual build outputs:

- Identify non-deterministic timestamps embedded in binary headers.

- Isolate random ordering issues in compiler-generated maps.

- Pinpoint exact byte offsets where divergence occurs.

- Validate that source patches apply cleanly without drift.

A significant caveat exists: fixing one source of non-determinism often exposes latent ordering dependencies in the toolchain. Teams that ignore this phase risk total build pipeline failure when the blocking policy activates. The cost of delayed remediation exceeds the effort of early adoption.
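Two of the causes listed above, embedded timestamps and unstable ordering, can be removed at the point where an archive is written. The sketch below assumes the build honours the `SOURCE_DATE_EPOCH` environment variable and falls back to 0 when it is unset; `deterministic_tar` is a hypothetical helper, not part of any Debian tool.

```python
import io
import os
import tarfile
from typing import Dict


def deterministic_tar(files: Dict[str, bytes]) -> bytes:
    """Write a tar archive whose bytes depend only on names and contents."""
    # Clamp every timestamp to one externally supplied value.
    epoch = int(os.environ.get("SOURCE_DATE_EPOCH", "0"))
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w", format=tarfile.USTAR_FORMAT) as tf:
        for name in sorted(files):  # fixed member order
            info = tarfile.TarInfo(name)
            info.size = len(files[name])
            info.mtime = epoch
            info.mode = 0o644
            info.uid = info.gid = 0          # no builder-specific ownership
            info.uname = info.gname = ""
            tf.addfile(info, io.BytesIO(files[name]))
    return buf.getvalue()


first = deterministic_tar({"b.txt": b"two", "a.txt": b"one"})
second = deterministic_tar({"a.txt": b"one", "b.txt": b"two"})
print(first == second)
```

Insertion order, the wall clock, and the invoking user no longer influence the output, so two builders holding the same inputs obtain the same archive bytes.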

Operationalizing Upstream Contributions and Mitigating Cache Poisoning Risks

Python Cache Poisoning Mechanics in Supply Chains

An academic paper by Marc Ohm et al. presents what it describes as the first investigation into cache poisoning, in which an attacker manipulates bytecode to execute malicious code without altering human-readable source files. The technique targets the `__pycache__` directory, allowing attackers to inject harmful logic that bypasses standard source-code audits entirely. A proof of concept demonstrates that an attacker can inject malicious bytecode into a cache file without failing the Python interpreter's integrity checks. The paper reports that about 12,500 packages on the Python Package Index are distributed with these vulnerable cache files included. The operational reality forces a choice between trusting pre-compiled artifacts and enforcing strict build-from-source policies.
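One way to see why a source audit misses this is to compare a cache file against a fresh compile of its source. The sketch below is not the paper's proof of concept: it assumes CPython 3.7 or later (a 16-byte `.pyc` header) and a timestamp-based cache, and `pyc_matches_source` is a hypothetical helper.

```python
import importlib.util
import marshal
import py_compile
import tempfile
from pathlib import Path

PYC_HEADER_SIZE = 16  # magic, flags, then mtime+size or a source hash


def pyc_matches_source(source_path: Path, pyc_path: Path) -> bool:
    """Return True only if the cached bytecode equals a fresh compile."""
    cached = pyc_path.read_bytes()
    if cached[:4] != importlib.util.MAGIC_NUMBER:
        return False  # written by another interpreter version; not comparable
    cached_code = marshal.loads(cached[PYC_HEADER_SIZE:])
    fresh_code = compile(source_path.read_bytes(), str(source_path),
                         "exec", dont_inherit=True)
    return cached_code == fresh_code


with tempfile.TemporaryDirectory() as tmp:
    src = Path(tmp) / "mod.py"
    pyc = Path(tmp) / "mod.pyc"
    src.write_text("def greet():\n    return 'hello'\n")
    py_compile.compile(str(src), cfile=str(pyc), doraise=True)
    honest = pyc_matches_source(src, pyc)

    # Simulated poisoning: keep the original header, which still describes
    # the untouched source file, and swap only the marshalled bytecode.
    header = pyc.read_bytes()[:PYC_HEADER_SIZE]
    evil = compile("def greet():\n    return 'pwned'\n", str(src), "exec")
    pyc.write_bytes(header + marshal.dumps(evil))
    poisoned = pyc_matches_source(src, pyc)

print("honest cache matches source:  ", honest)
print("poisoned cache matches source:", poisoned)
```

The source file never changes in this demonstration, which is why reviewing `mod.py` alone reveals nothing; only recompiling and comparing exposes the swap.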

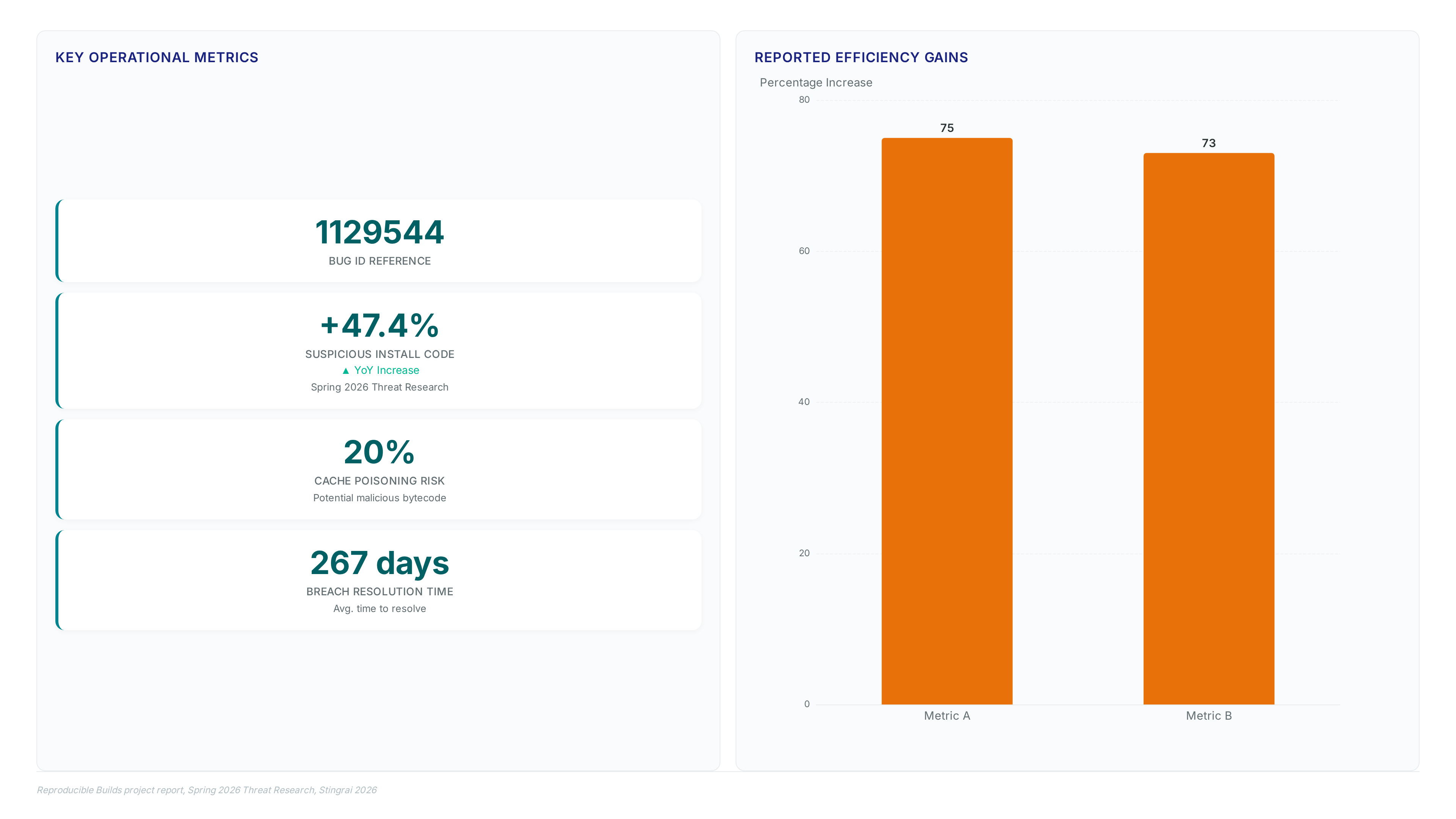

According to the Reproducible Builds project report, Chris Lamb filed bug #1129544 against python-nxtomomill to resolve build non-determinism. Operators must first isolate the source of variance, often a randomized data structure or a timestamp, before drafting a fix. The fix typically replaces nondeterministic functions with seeded equivalents or sorts unordered collections prior to serialization. This direct intervention prevents downstream cache poisoning in which malicious bytecode hides in unverified artifacts. However, submitting these patches demands deep familiarity with upstream tooling that many network teams lack. The cost is measurable engineering time spent navigating external contribution workflows rather than configuring local firewalls.
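The two kinds of fix described above look roughly like this in Python. The example is generic and is not the patch attached to the bug report; `serialize_manifest` and `pick_sample` are hypothetical functions.

```python
import json
import random
from typing import Iterable, List


def serialize_manifest(entries: Iterable[str], metadata: dict) -> str:
    """Serialize build metadata so equal inputs always give equal bytes."""
    payload = {
        # Iteration order of a set of strings can change between runs
        # because of hash randomization, so sort before writing.
        "entries": sorted(set(entries)),
        "metadata": metadata,
    }
    return json.dumps(payload, sort_keys=True, separators=(",", ":"))


def pick_sample(items: Iterable[str], count: int, seed: int = 0) -> List[str]:
    """Choose items with a private, seeded generator instead of global state."""
    rng = random.Random(seed)
    return rng.sample(sorted(items), count)


print(serialize_manifest(["b", "a", "a"], {"z": 1, "a": 2}))
print(pick_sample({"x", "y", "z"}, 2) == pick_sample({"z", "y", "x"}, 2))
```

Sorting costs little at build time and removes a whole class of ordering differences; the seeded generator keeps any sampling repeatable without touching the process-wide random state.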

About

Vladislava Shadrina, Customer Account Manager at InterLIR, brings a unique perspective to the critical discussion on Reproducible Builds. While her daily work focuses on managing client relations and ensuring secure IPv4 resource allocation, she understands that modern network security extends far beyond clean IP reputation. As InterLIR helps organizations build reliable network infrastructures, the integrity of the software supply chain becomes paramount. The staggering 47.4% increase in suspicious install code in e's Spring 2026 Threat Research highlights why infrastructure managers must care about build reproducibility. Shadrina connects her experience in maintaining transparent, secure transactions to the broader need for verifiable software artifacts. Just as InterLIR guarantees clean BGP routes to prevent hijacking, Reproducible Builds ensure code has not been tampered with during compilation. This article bridges her expertise in resource security with the urgent necessity of protecting the software development lifecycle from the rising tide of malicious packages identified in recent industry reports.

Conclusion

Scaling reproducible builds reveals a critical breaking point: hash verification becomes useless if the compiler binary itself is compromised before the initial build. While upstream maintainers struggle with the entropy of non-deterministic code, attackers are bypassing source audits entirely by injecting malicious bytecode directly into cache files. This shift means your integrity checks might pass on a fully infected artifact, creating a false sense of security that delays breach resolution by months. The operational cost here is not just technical debt; it is the silent accumulation of trusted but poisoned dependencies across your entire supply chain.

Organizations must stop waiting for universal upstream adoption and immediately enforce local hash verification policies backed by ephemeral, clean-room build environments. Do not trust distributed packages containing pre-existing cache files under any circumstances. By Q4 2027, every critical infrastructure team should mandate isolated compilation containers that strip all `__pycache__` directories prior to execution. This timeline is aggressive because the window for detecting these injections before they become endemic is closing rapidly.
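Stripping caches before a build is simple to automate. The following is a minimal sketch, assuming the build tree is a local directory that the build user may modify; `strip_bytecode_caches` is a hypothetical helper and the file names in the demonstration are illustrative.

```python
import os
import shutil
import tempfile
from pathlib import Path
from typing import List


def strip_bytecode_caches(root: Path) -> List[Path]:
    """Delete every __pycache__ directory and stray .pyc/.pyo file under root."""
    removed: List[Path] = []
    for dirpath, dirnames, filenames in os.walk(root):
        if "__pycache__" in dirnames:
            target = Path(dirpath) / "__pycache__"
            shutil.rmtree(target)
            dirnames.remove("__pycache__")  # do not descend into the deleted dir
            removed.append(target)
        for name in filenames:
            if name.endswith((".pyc", ".pyo")):
                target = Path(dirpath) / name
                target.unlink()
                removed.append(target)
    return sorted(removed)


with tempfile.TemporaryDirectory() as tmp:
    pkg = Path(tmp) / "pkg"
    (pkg / "__pycache__").mkdir(parents=True)
    (pkg / "__pycache__" / "mod.cpython-311.pyc").write_bytes(b"cached")
    (pkg / "legacy.pyc").write_bytes(b"cached")
    (pkg / "mod.py").write_text("x = 1\n")

    removed = strip_bytecode_caches(Path(tmp))
    remaining = sorted(p.name for p in pkg.iterdir())

print(len(removed), remaining)
```

The function returns what it deleted so that a pipeline can log the findings: a cache file inside a freshly unpacked source package is itself worth investigating.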

Start this week by auditing your current build hosts for unauthorized cache artifacts and implementing a strict policy to delete them before compilation begins. This single action disrupts the primary vector for cache-based code injection today.